Consonance Processing in the Absence of Relevant Experience: Evidence from Nonhuman Animals

Juan M. Toro

ICREA

Universitat Pompeu Fabra

Paola Crespo-Bojorque

Universitat Pompeu Fabra

Abstract

Consonance is a major feature in harmonic music that has been related to how pleasant a sound is perceived to be. Consonant chords are defined by simple frequency ratios between their composing tones, whereas dissonant chords are defined by more complex frequency ratios. The extent to which such simple ratios in consonant chords could give rise to preferences and processing advantages for consonance over dissonance has generated much research. Additionally, there is mounting evidence for a role of experience in consonance perception. Here we review experimental data coming from studies with different species that help to broaden our understanding of consonance and the role that experience plays in it. Comparative studies offer the possibility of disentangling the relative contributions of species-specific vocalizations (by comparing across species with rich and poor vocal repertoires) and exposure to harmonic stimuli (by comparing populations differing in their experience with music). This is a relatively new field of inquiry, and much more research is needed to get a full understanding of consonance as one of the bases for harmonic music.

Keywords: consonance, interval ratios, vocal learning, rats

Author Note: Juan M. Toro, Universitat Pompeu Fabra, C. Ramon Trias Fargas, 25-27, 08005, Barcelona, Spain.

Correspondence concerning this article should be addressed to Juan M. Toro at juanmanuel.toro@upf.edu.

Acknowledgments: This research was funded by the ERC Starting Grant contract number 312519. We thank three anonymous reviewers, whose comments greatly improved this article.

Introduction

In this review we describe experimental work studying the underlying mechanisms involved in the perception of consonance. More specifically, we focus on data exploring whether innate auditory constraints give rise to consonance perception and whether the complexity of species-specific auditory signals (and presumably the perceptual mechanisms that support them) impacts consonance perception. Consonance is one of the most salient features of harmonic music, and it has been associated with pleasantness. Here we also describe how experimental research with nonhuman animals can make important contributions to our understanding of how experience might modulate consonance processing. The term consonance comes from the Latin consonare, meaning “sounding together.” In Western music a smooth-sounding combination of tones is considered to be consonant (pleasant), whereas a harsh-sounding harmonic combination is considered dissonant (unpleasant). Consonant intervals are usually described as more pleasant, euphonious, and beautiful than dissonant intervals, which are perceived as unpleasant, discordant, or rough (Plomp & Levelt, 1965). Thus, the terms consonance and dissonance make reference to the degree of pleasantness or stability of a musical sound as perceived by an individual.

One of the most widespread explanations for the perceptual phenomena of consonance and dissonance is related to the simplicity of the frequency ratios between the tones composing a chord. In Western musical traditions, the earliest associations between consonance and simple frequency ratios are attributed to Pythagoras. The simpler the ratio between two notes, the more consonant the sound. For example, the frequency ratio between the two notes composing an octave is 1:2. Conversely, the more complex the ratio between two notes, the more dissonant the sound. For example, the frequency ratio between the two notes composing a tritone is 32:45 (see Table 1). Many studies suggest that there is in fact a connection between the complexity of frequency ratios and innate auditory constraints that give rise to consonance perception. As explained by Bidelman and Heinz (2011), consonant intervals contain only a few frequencies that pass through the same critical bandwidth of the auditory filters in cochlear mechanisms. This creates pleasant percepts that contrast with those emerging from the higher number of frequencies competing within individual channels that are present in dissonant intervals.

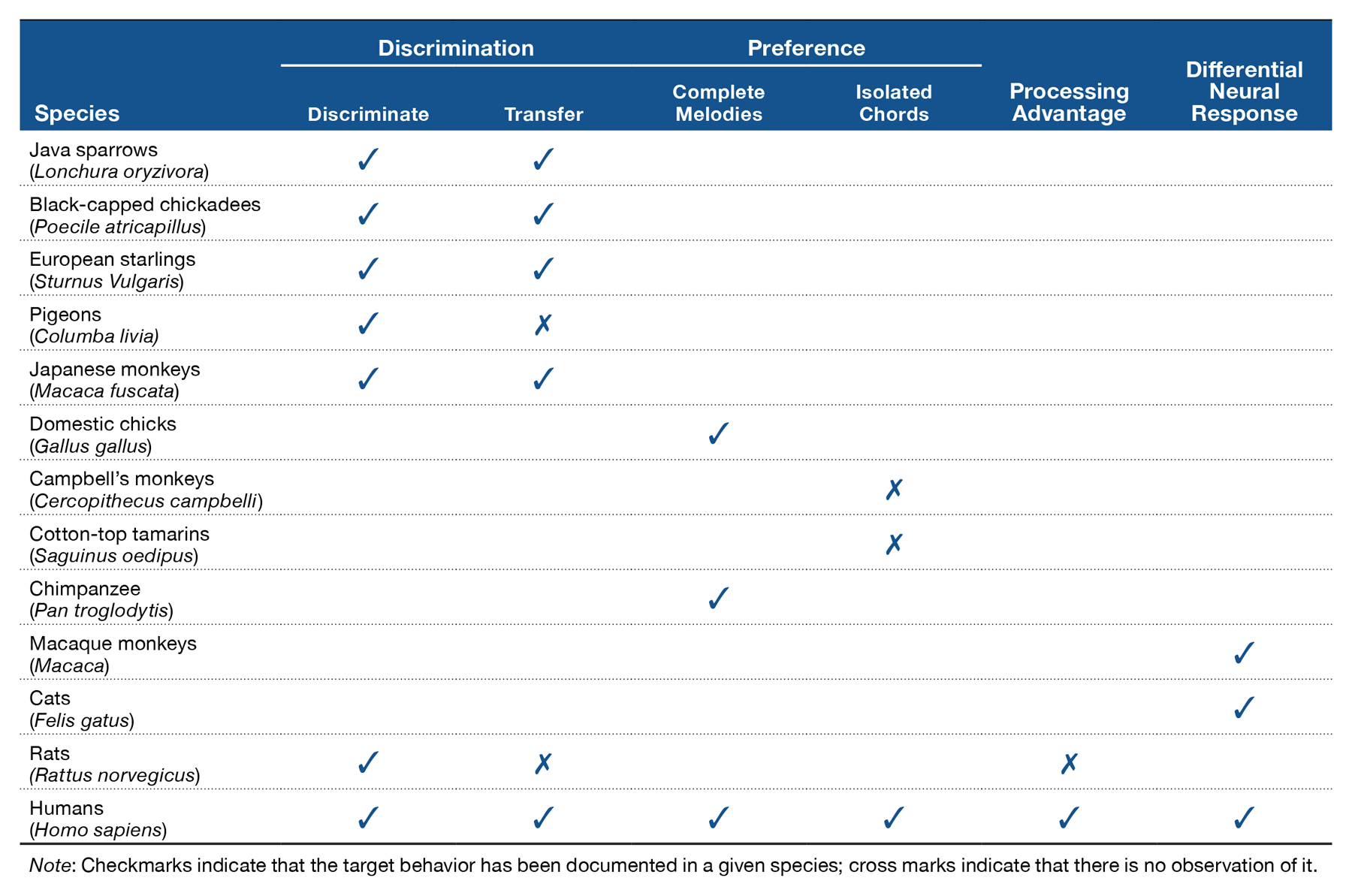

Table 1. Consonance Ordering for Two-Tone Intervals from Helmholtz (1877), Decreasing in Order of “Perfection” from the Most Consonant to the Most Dissonant.
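The link between ratio simplicity and consonance can be illustrated with a minimal sketch. Only the octave (1:2) and tritone (32:45) ratios are taken from the text above; the remaining ratios are the conventional just-intonation values, assumed here for illustration. The complexity index (numerator plus denominator) is a hypothetical simplification and is not claimed to reproduce the ordering in Table 1.

```python
from fractions import Fraction

# Frequency ratios written as lower:upper tone.
# P8 (1:2) and TT (32:45) come from the text; the other values are
# conventional just-intonation ratios, assumed for illustration only.
INTERVAL_RATIOS = {
    "P8": Fraction(1, 2),
    "P5": Fraction(2, 3),
    "P4": Fraction(3, 4),
    "M3": Fraction(4, 5),
    "M7": Fraction(8, 15),
    "m2": Fraction(15, 16),
    "TT": Fraction(32, 45),
}


def ratio_complexity(ratio):
    """Hypothetical index: numerator + denominator of the ratio in lowest terms."""
    return ratio.numerator + ratio.denominator


def rank_by_simplicity(ratios):
    """Return interval names ordered from the simplest to the most complex ratio."""
    return sorted(ratios, key=lambda name: ratio_complexity(ratios[name]))


if __name__ == "__main__":
    for name in rank_by_simplicity(INTERVAL_RATIOS):
        r = INTERVAL_RATIOS[name]
        print(f"{name}: {r.numerator}:{r.denominator} (index {ratio_complexity(r)})")
```

Under these assumptions, the intervals treated as consonant in this review (P8, P5, P4, M3) all receive a lower index than those treated as dissonant (M7, m2, TT).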

The relation between ratio complexity and auditory-processing mechanisms that are widely shared across species has reinforced innate consonance perception hypotheses. There are in fact various sources of evidence suggesting strong biological constraints in the processing of consonance. Studies across different periods and different countries have reported similar judgments about the degree of consonance over tone combinations of the chromatic scale, with some tone combinations consistently ranked as consonant and others consistently ranked as dissonant. More important, musical traditions from disparate cultures often make use of many of the exact same intervals and scales (e.g., Burns, 1999; Gill & Purves, 2009), suggesting an overall preference for certain sound combinations over others. Furthermore, from a developmental perspective, infants have been shown to prefer consonance to dissonance (e.g., Trainor & Heinmiller, 1998; Trainor, Tsang, & Cheung, 2002; Zentner & Kagan, 1998), and newborns have been observed to react differently to consonant and dissonant versions of melodies (Perani et al., 2010; see also Masataka, 2006).

However, recent studies have challenged the universality of consonance judgments and suggest a central role for experience in the development of consonant preferences. A series of experiments showed that chord familiarity modulates consonance ratings. If listeners are trained to match the pitches of two-note chords, they will rate these chords as less dissonant than untrained pitches independent of their tune (McLachlan, Marco, Light, & Wilson, 2013). A study with 6-month-old infants showed that after a short preexposure to consonant or dissonant stimuli, infants do not show a preference for consonance over dissonance. Infants paid more attention to the stimuli to which they were preexposed (the familiar stimulus), independent of whether it was consonant or dissonant (Plantinga & Trehub, 2014). A study with members of a native Amazonian society provided additional empirical support to the idea that experience plays a pivotal role in preferences for consonance (McDermott, Schultz, Undurraga, & Godoy, 2016). The authors compared ratings of the pleasantness of sounds between populations from the United States and three populations from Bolivia, including indigenous participants with presumably little exposure to Western music. Results showed cross-cultural variations, such that participants in the United States showed clear consonance preferences but indigenous Bolivian participants did not. The results thus suggested that consonance preferences might not emerge universally across cultures but rather from long-term exposure to a tonal system in which consonance is central to harmonic music (see also Fritz et al., 2009).

A Comparative Approach to Consonance Processing

Comparative work is central to exploring questions about the initial state of knowledge of music and how this initial state is transformed by relevant experience. Experiments testing nonhuman animals are relevant in this field for two reasons. First, musical exposure can be carefully controlled under laboratory conditions. Unlike human adults and infants, animals can be deprived of any musical stimuli. Thus, any music-related perceptual biases found in animals cannot be the result of musical exposure. Second, because animals do not produce music, any musical biases exhibited by them would presumably reflect general auditory mechanisms not specific to music (e.g., McDermott & Hauser, 2005). Hence, the comparative approach can provide data that would be challenging to obtain in other ways.

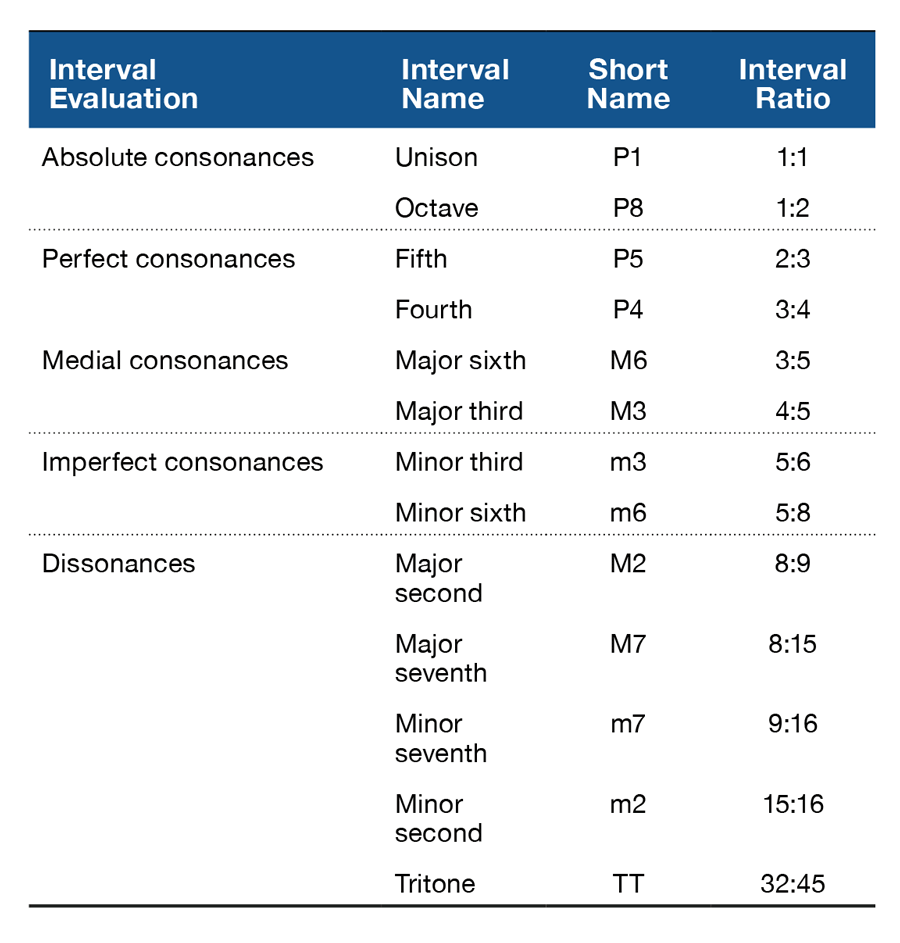

Studies exploring the perception of consonance and dissonance in nonhuman animals have been limited, with only a small variety of species tested so far. However, research tackling consonance perception in nonhuman animals has explored the phenomenon from different perspectives, including discrimination, preference, and neurophysiological studies (see Table 2). Discrimination studies have tested the perception of isolated consonant and dissonant chords primarily in avian and primate species. In these studies, Java sparrows (Watanabe, Uozumi, & Tanaka, 2005) and Japanese macaques (Izumi, 2000) were trained to discriminate between consonant and dissonant chords (e.g., the octave and the major seventh in the experiment with macaques, and triadic chords in the case of sparrows). Both species successfully discriminated consonance from dissonance and transferred the learned behavior to other sounds with novel frequencies. Successful discrimination of chords based on relative pitch changes has also been reported in black-capped chickadees (Hoeschele, Cook, Guillette, Brooks, & Sturdy, 2012), pigeons (Brooks & Cook, 2009), and European starlings (Hulse, Bernard, & Braaten, 1995). Neurophysiological studies corroborated the ability of animals to discriminate musical intervals based on consonance (for a review, see Bidelman, 2013). Different neural responses for consonant and dissonant stimuli have been observed in the auditory nerve (Tramo, Cariani, Delgutte, & Braida, 2001) and inferior colliculus (McKinney, Tramo, & Delgutte, 2001) of cats, as well as in the primary auditory cortex of monkeys (Fishman et al., 2001). Thus, with proper training, different species seem to be able to tell apart consonant from dissonant chords.

Beyond perceptual differences, studies have also revealed spontaneous preferences for consonance over dissonance in nonhuman animals. In these studies, newly hatched domestic chicks (Chiandetti & Vallortigara, 2011) and an infant chimpanzee (Sugimoto et al., 2010) were presented with consonant and dissonant versions of complete melodies. Results showed that chicks preferentially approached a visual imprinting object associated with consonant melodies over an identical object associated with dissonant melodies. Similarly, the infant chimpanzee consistently produced, with the aid of a computerized setup, consonant versions of melodies for longer periods than dissonant versions of those same melodies. However, no preferences have been observed in other species when tested with isolated consonant and dissonant chords. McDermott and Hauser (2004) tested cotton-top tamarins in a V-shaped maze and found that the animals spent the same amount of time next to a loudspeaker presenting consonant sounds as to one presenting dissonant sounds. Similarly, Campbell’s monkeys equally approached two opposing sides of an experimental room that produced consonant or dissonant sounds (Koda et al., 2013). Thus, neither tamarins nor Campbell’s monkeys show any preference for consonance over dissonance. However, these animals did have preferences for some features of acoustic stimuli, such as softness over loudness. Further studies should explore the possibility that differences observed across studies on consonance preferences might be linked to the type of stimuli used in the experiments. Preferences might be observed only when stimuli include complete melodies but not when the stimuli are isolated chords. If it is confirmed that preference for consonance in animals is observed only for complete melodies and not for isolated chords, this would be a difference with respect to humans, who in many studies have been shown to prefer single consonant chords over single dissonant chords (e.g., Butler & Daston, 1968; Trainor & Heinmiller, 1998). Other than stimulus type (complete melodies vs. isolated chords), it would be interesting to explore whether the differences observed across experiments could be due to the testing of adult versus young animals, or to the specific experimental methodology used (e.g., place preference vs. joystick vs. imprinting). A final factor worth exploring is the ecological relevance of the stimuli, and possible stress induced by separation of animals from their social group.

Together these findings demonstrate that at least some sensitivity to consonance is not uniquely human. However, research in this area has just begun, and much work is still needed to understand all the factors underlying the perception of consonance. For instance, most of the results come from avian species that have a complex vocal system that involves the production and perception of relatively long sequences of harmonic sounds. It is thus important to explore the role of vocal production in consonance perception. Producing complex vocalizations has been considered a constraint for the structure of music (Merker, Morley, & Zuidema, 2015). Thus, testing species with no vocal learning abilities could shed light on whether consonance perception is affected by production. At the same time, experimental work on species other than primates might provide information regarding analogies in the emergence of traits necessary for musical processing.

Consonance Perception in a Rodent

Recent studies with rats have explored whether the perception of consonance in animals that lack relevant experience (in terms of vocal production and exposure to harmonic stimuli) resembles that of humans. The rat (Rattus norvegicus) is a species for which there is no evidence of vocal learning (e.g., Litvin, Blanchard, & Blanchard, 2007). It produces at least three classes of ultrasonic vocalizations, associated with both negative and positive affective states. Although some of their calls have harmonic components (e.g., Brudzynski & Fletcher, 2010), rats reared and tested under controlled laboratory conditions lack long-term exposure to complex harmonic sounds (such as those produced by songbirds) and to musical stimuli prior to the experiments.

In a series of experiments, rats were tested on their ability to properly perceive and discriminate consonance from dissonance (Crespo-Bojorque & Toro, 2015). Animals were trained to discriminate sequences of three consonant chords (e.g., the octave [P8], the fourth [P4], and the fifth [P5]) from sequences of three dissonant chords (e.g., the tritone [TT], minor ninth [m9], and minor second [m2]). The animals received reinforcement (sweet pellets) for their responses (pressing a lever in a response box) after the presentation of consonant chords but not after the presentation of dissonant chords. Of importance, to make sure that the principal cue for the discrimination task was the interval ratios between the tones composing the chords and not their absolute pitch, stimuli were created in three octaves. After training there was a test phase. In the test phase, rats were presented with sequences containing new consonant (e.g., major third [M3]) and dissonant chords (e.g., major seventh [M7]) implemented at novel octaves not used during training (thus, fundamental frequency of stimuli was different from training to test). The responses to the novel consonant and dissonant stimuli were then registered. Results showed that rats successfully learned to discriminate consonant from dissonant sequences during training. They pressed the lever more often after consonant than after dissonant chords. However, the animals were not able to generalize such discrimination to sequences containing new consonant and dissonant chords presented during the test. There were no differences in responses to novel stimuli. This failure to generalize suggested that the animals might not be learning a categorical difference between consonance and dissonance. Rather, rats might be learning to discriminate just the specific sounds presented during training. Once the properties of these sounds change (in terms of absolute frequency) by being implemented in a different octave, the rats cannot discriminate among them (for a similar result with speech stimuli, see Toro & Hoeschele, in press).

A follow-up experiment explored whether the rats were in fact organizing the target stimuli around categories of consonance and dissonance or were only memorizing the specific stimuli presented during training. Rats were tested on their ability to discriminate between two sets of dissonant stimuli and generalize them to novel octaves. Thus, in this experiment, stimuli differed in the interval ratios between tones but not in terms of consonance and dissonance. Stimuli presented during the test were the same set of chords used during training, but they were implemented in novel octaves (so the interval ratios between the tones were the same but the absolute frequencies were different). Results were very similar to the ones observed in the previous experiment. Rats learned to discriminate stimuli during training, so they were able to discern two sets of dissonant chords. However, there was no indication that the animals generalized the discrimination to novel items. This result was even more striking because the stimuli from training to test differed only in their absolute frequencies and not in terms of the interval ratios between tones. The results from these two experiments suggested that the animals might be memorizing the specific items presented during discrimination training. But there is no evidence that the animals were creating categories in terms of consonance and dissonance that could be extrapolated to stimuli in different octaves. The fact that rats failed to generalize the learned discrimination to chords implemented at different frequency ranges suggests that this rodent species might be facing difficulties while performing whole octave transpositions.
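The distinction between interval ratio and absolute frequency that these experiments rely on can be sketched as follows. The 220 Hz base frequency and the function names are hypothetical; the source does not report the actual frequencies used. The sketch only shows that moving a chord by whole octaves changes every absolute frequency while leaving the interval ratio untouched.

```python
from fractions import Fraction


def make_chord(base_hz, upper_over_lower):
    """Build a two-tone chord as (lower, upper) from a base frequency and a ratio."""
    lower = Fraction(base_hz)
    return (lower, lower * upper_over_lower)


def transpose_octaves(chord, octaves):
    """Shift both tones by a whole number of octaves (each octave doubles frequency)."""
    factor = Fraction(2) ** octaves
    return tuple(freq * factor for freq in chord)


def interval_ratio(chord):
    """Ratio of the upper to the lower tone; independent of absolute pitch."""
    lower, upper = chord
    return upper / lower


if __name__ == "__main__":
    # Tritone (32:45 as lower:upper, so upper/lower = 45/32) on an assumed 220 Hz base.
    training = make_chord(220, Fraction(45, 32))
    test = transpose_octaves(training, 1)
    print("training:", [float(f) for f in training], interval_ratio(training))
    print("test:    ", [float(f) for f in test], interval_ratio(test))
```

A listener that encodes only the absolute frequencies would treat the training and test chords as different items, whereas one that encodes the ratio would treat them as the same interval.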

In contrast with the lack of generalization across octaves observed in the rats, previous research has shown that adult humans easily perform whole octave transpositions (e.g., Hoeschele, Weisman, & Sturdy, 2012). In fact, when human participants were tested with exactly the same stimuli presented to rats, they succeeded in generalizing to new consonant or dissonant sequences and to different octaves. Thus, when faced with the same stimuli as rats, humans performed whole octave transpositions without much difficulty (Crespo-Bojorque & Toro, 2015). There are thus limitations that rats face while processing sounds in terms of consonance and dissonance. A major one seems to be their difficulty in creating categories that can be generalized to novel stimuli. In contrast, the creation of such categories does not seem to be a major problem for consonance processing in humans. One of the reasons for this difference could be the lack of extensive exposure to harmonically complex sounds in the rats, both in terms of production of species-specific vocalizations and experience with harmonic music (see below).

Processing Advantages

In contrast with rats, humans seem to be able to use consonance and dissonance as different categories that inform perceptual decisions. In fact, processing differences between consonant and dissonant chords have also been identified in both human adults (Komeilipoor, Rodger, Craig, & Cesari, 2015; Schellenberg & Trehub, 1994) and infants (Schellenberg & Trehub, 1996). Consonant chords and melodies seem to be better processed than dissonant ones. In the studies by Schellenberg and Trehub (1994, 1996), adult and infant participants found it easier to detect changes in patterns when they were implemented in acoustic stimuli composed of consonant intervals compared to dissonant intervals. Similarly, in a recent study, Komeilipoor and colleagues (2015) found that participants’ performance in a movement synchronization task, using a finger-tapping paradigm, was better after the presentation of consonant stimuli than after the presentation of dissonant stimuli. Results showed a higher percentage of movement coupling and a higher degree of movement circularity after the exposure to consonant sounds than to dissonant sounds. Thus, several experiments suggest that aesthetic preferences for consonance are also linked to processing advantages.

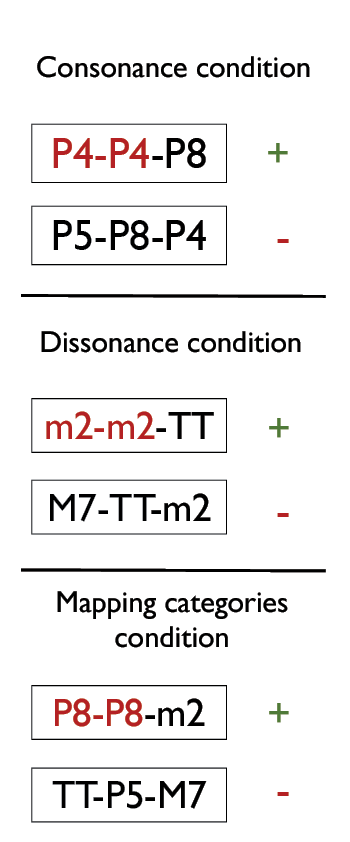

It might be the case that simple ratios defining consonant intervals facilitate processing by favoring their detection, storage, and retrieval. If so, the roots of the consonant advantage observed in humans would be a product of the physical properties of consonant chords (for a recent review of relevant literature, see Bidelman, 2013). A recent study used a comparative approach to explore whether the processing benefits for consonance could also be observed in other species (Crespo-Bojorque & Toro, 2016). If the processing advantages observed in humans are a result of the physical properties of consonant chords, it is possible that these advantages could also be observed in nonhuman animals. In the study, rats and humans were trained to produce responses (lever presses and button presses, respectively) after the presentation of chord sequences following an abstract AAB pattern but to withhold responses after an ABC pattern (where A, B, and C represent different chords). After the training phase, they were tested on their ability to discriminate novel AAB and ABC sequences. Experiments tested rule learning and generalization with sequences containing consonant chords (e.g., AAB: P8-P8-P5; ABC: P8-P5-P4; see Table 1), dissonant chords (e.g., AAB: TT-TT-m2; ABC: TT-m2-M7; see Table 1), or a combination of consonant and dissonant chords in a sequence where consonance would always be mapped to A positions in the sequence, whereas dissonance would always be mapped to B positions in the sequence (e.g., AAB: P8-P8-TT; see Figure 1).

Figure 1. Schematic representation of the three experiments testing processing advantages for consonance in both human and nonhuman animals. Note. Reinforced sequences (+) always followed an AAB pattern, whereas nonreinforced sequences (–) followed an ABC pattern. In the consonance condition, all intervals were consonant (P4, P5, P8). In the dissonant condition, all intervals were dissonant (m2, M7, TT). In the mapping categories condition, consonant intervals were always used in the A position of the sequence and dissonant intervals were always used in the B position of the sequence.
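The abstract rule used in these experiments can be sketched in a few lines. The chord sets follow the conditions listed in the Figure 1 note; the function names are hypothetical and the code is an illustration of the AAB versus ABC contrast, not a reconstruction of the experimental software.

```python
# Chord labels as given in the Figure 1 note.
CONSONANT = {"P4", "P5", "P8"}
DISSONANT = {"m2", "M7", "TT"}


def pattern_of(sequence):
    """Classify a three-chord sequence by its abstract structure."""
    first, second, third = sequence
    if first == second and second != third:
        return "AAB"
    if len({first, second, third}) == 3:
        return "ABC"
    return "other"


def is_reinforced(sequence):
    """AAB sequences were reinforced (+); ABC sequences were not (-)."""
    return pattern_of(sequence) == "AAB"


def follows_mapping(sequence):
    """Mapping condition: consonant chords in A positions, a dissonant chord in B."""
    first, second, third = sequence
    return (
        pattern_of(sequence) == "AAB"
        and first in CONSONANT
        and second in CONSONANT
        and third in DISSONANT
    )


if __name__ == "__main__":
    examples = [
        ("P8", "P8", "P5"),  # consonant AAB
        ("P8", "P5", "P4"),  # consonant ABC
        ("TT", "TT", "m2"),  # dissonant AAB
        ("TT", "m2", "M7"),  # dissonant ABC
        ("P8", "P8", "TT"),  # mapping condition
    ]
    for seq in examples:
        print(seq, pattern_of(seq), is_reinforced(seq), follows_mapping(seq))
```

Note that the rule itself refers only to the identity relations between positions, so it can be satisfied by consonant chords, dissonant chords, or a mixture of the two.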

Both rats and human participants succeeded at discriminating and generalizing the abstract auditory rules in all the experiments. They both learned abstract rules over sequences of tones. This result suggests that the computational mechanism(s) required for performing such generalizations is present in both species. However, human participants’ performance was significantly better when the target sequences included consonant chords (as in P8-P8-P5) than when the sequences included dissonant chords (as in TT-TT-m2). In contrast, rats showed no differences across experiments. Their performance did not significantly change depending on whether the rules were implemented with consonant or dissonant chords. Furthermore, when the abstract structure was mapped to consonant and dissonant chords (such that A tokens in the AAB structure would always be consonant and B tokens would always be dissonant), humans’ performance in the rule-learning task improved, showing an additional advantage over both consonant and dissonant sequences. This additional advantage suggested that consonance and dissonance act as categorical anchors for humans, thereby facilitating the discrimination of elements in the structure. In sharp contrast with the human results, there was no evidence that the rats benefited from this mapping between consonance and categories within a structure. Thus, although rats were able to learn and generalize the abstract rules, the difference between consonance and dissonance did not translate into a processing advantage for them (Crespo-Bojorque & Toro, 2016). Human participants, in contrast, were able to use consonance contrasts to facilitate abstract rule extraction.

Experience and the Emergence of Consonance Preferences

Why did the rats not benefit from consonance and show improvement of their performance in the rule-learning task? As we mentioned earlier, there is a growing consensus that experience with harmonic stimuli plays a pivotal role in the development of the abilities supporting consonance processing (e.g., McLachlan et al., 2013). There are thus two types of experience that could be relevant for consonance processing that might explain why rats tested in the previous experiments did not benefit from consonance. One is the production of complex species-specific vocalizations, and the other is the exposure to harmonic stimuli. In species for which harmonic vocalizations play an important role in social behavior, the second possibility is subsumed by the first. In species in which harmonic vocalizations do not play an important role in social behavior, exposure to harmonic environmental sounds (or exposure under laboratory conditions) could provide experience with target sounds.

Although vocal production has been considered an important constraint for the structure of music in general (Merker et al., 2015), few studies have been devoted to determining which features of music might be affected by this capacity (but see Bowling, Sundararajan, Han, & Purves, 2012; Gill & Purves, 2009; Juslin & Laukka, 2003). It has been suggested that the preference for consonance and its processing advantages might arise from the statistical structure of human vocalizations, the periodic acoustic stimuli to which humans are most exposed (Schwartz, Howe, & Purves, 2003; Terhardt, 1984). The hypothesis is that extensive experience producing and perceiving harmonic sounds facilitates the emergence of consonance preferences (Bowling & Purves, 2015). Support for this hypothesis comes from studies showing that the consonance of intervals is predicted by ratios emphasized between harmonics in speech (e.g., Schwartz et al., 2003). Additional support could come from experiments showing good consonance processing in species that produce complex harmonic vocalizations and poor consonance processing in species with a more limited vocal repertoire. Conversely, evidence against this hypothesis could come from experiments showing that species for which harmonic vocalizations play an important role in social behavior lack consonance preferences, or can be trained to prefer dissonance just as easily. In fact, preferences for consonance are affected at least to some degree by exposure to Western harmonic music in humans (McDermott et al., 2016). Thus, comparative experiments with nonhuman animals will certainly offer a more complete picture of this issue.

A complementary issue is whether producing complex harmonic vocalizations helps in the creation of sound categories along a consonance–dissonance continuum independently of the specific frequency of the individual intervals. There are indications that some birds can generalize to novel chords based on the consonance defining them (e.g., Hoeschele et al., 2012; Hulse et al., 1995; Watanabe et al., 2005; see Table 2). However, several experiments have reported strong limitations in nonhuman animals in their abilities to generalize across frequencies and perform pitch “transpositions” (an ability that would be pivotal for proper generalization across frequencies as it is observed in humans; see Patel, in press). European starlings (Bregman, Patel, & Gentner, 2012; Hulse & Cynx, 1985), rats (Crespo-Bojorque & Toro, 2015), pigeons (Brooks & Cook, 2009; see also Friedrich, Zentall, & Weisman, 2007), and chickadees (Hoeschele, Weisman, Guillette, Hahn, & Sturdy, 2013) seem to be strongly constrained by absolute pitch in how they process acoustic stimuli, showing no evidence of generalization across octaves. This lack of generalization observed in both songbirds (starlings) and species with no documented vocal learning abilities (rats and pigeons) calls for further studies. It is necessary to explore the exact role that experience producing and perceiving harmonic sounds plays during the creation of categories around stimuli that vary in frequency ratios that would allow for proper generalization across octaves. As has been shown by Bregman, Patel, and Gentner (2016), birds (European starlings) rely on acoustic cues other than absolute pitch to generalize to novel stimuli. Instead of using the absolute frequency of the sounds, they prioritize their spectral shape. It would thus be interesting to further explore the cues that are used to identify and categorize novel acoustic stimuli.

Several studies have addressed the idea that the capacity to learn to produce complex sequences of vocalizations is at the root of important music-related abilities, such as rhythm perception (for a review, see Patel, 2014). At a neural level, the capacity for vocal learning has been linked to specialized neural circuitry supporting strong connections between primary auditory and motor pathways (Bolhuis, Okanoya, & Scharff, 2010). This circuitry presumably facilitates the coordination of perception and production, allowing species-specific vocalizations (songs in the case of birds, speech in the case of humans) to be efficiently learned and produced, and might form the basis of, for example, rhythm synchronization. Studies have shown that some avian species (budgerigars: Hasegawa, Okanoya, Hasegawa, & Seki, 2011; cockatoos: Patel, Iversen, Bregman, & Schulz, 2009) but not rhesus monkeys (Honing, Merchant, Háden, Prado, & Bartolo, 2012) have the capacity to synchronize to a beat. Honing and collaborators observed that the monkeys were not able to display synchronization to a sequence of regular beats at different tempi even after a long period of training. In contrast, rhythm synchronization has been observed after training in a sea lion (see Cook, Rouse, Wilson, & Reichmuth, 2013). Similarly, the ability to produce and process highly complex harmonic stimuli might also contribute to the development of other important musically related abilities. For example, it might help in the creation of sound categories that are relevant while distinguishing consonant from dissonant sounds.

Work with humans across different ages and different cultures suggests that exposure to harmonic stimuli might be an important factor for the emergence of preferences for consonant sounds. As several experiments have demonstrated, preferences for consonance over dissonance are greatly influenced by preexposure to consonant stimuli (e.g., Plantinga & Trehub, 2014). Evidence from both neuroimaging and electrophysiological studies has shown that neural correlates for consonance and dissonance can change as a function of musical expertise. Musicians with extensive training have different brain activations for consonant and dissonant stimuli when compared to listeners with no formal musical training. As revealed from functional magnetic resonance imaging data, the areas of activation for consonant chords are right lateralized for nonmusicians and are much more bilateral for musicians (Minati et al., 2009). Likewise, in electroencephalogram studies, different event-related potential components were elicited from musicians and nonmusicians in response to consonant and dissonant intervals, suggesting that musicians discriminate intervals at earlier processing stages than nonmusicians (Proverbio & Orlandi, 2016; Regnault, Bigand, & Besson, 2001; Schön, Regnault, Ystad, & Besson, 2005). Thus, long-term exposure to harmonic stimuli not only helps in the development of aesthetic preferences for consonance but also modulates the brain responses that are triggered in response to consonant and dissonant chords (although see Bidelman, 2013).

Comparative experiments could provide much information that would help to clarify the role that experience with harmonic sounds actually plays in the emergence of consonance preferences. One could think of experiments in which rats are preexposed from birth to harmonic music and are tested later for their preferences between consonant and dissonant sounds. Such experiments would be telling regarding the extent to which experience determines consonance preferences. In the domain of language, comparative studies have advanced much of our understanding of the role that experience plays in some remarkable linguistic phenomena (for a review, see Toro, 2016). For example, with appropriate experience, nonhuman animals display categorical perception for speech sounds (Kuhl & Miller, 1975) and are able to use linguistic rhythm to tell languages apart (Ramus, Hauser, Miller, Morris, & Mehler, 2000), both abilities once thought to be uniquely human. Studies on consonance processing could paint a similar picture, showing that given enough experience, nonhuman animals might display consonance preferences for isolated chords.

As we have suggested before, exposure to harmonic music might be at the base of processing advantages for consonance (Komeilipoor et al., 2015; Schellenberg & Trehub, 1994, 1996). Preference for acoustic stimuli defined by simple frequency ratios between their composing tones could be a prerequisite to benefit from differences between consonance and dissonance, as has been observed in human participants (Crespo-Bojorque & Toro, 2016). Experiments exploring the role of exposure to harmonic music on processing advantages for consonant chords could also test whether such exposure results in similar advantages in non-Western listeners who have not had massive exposure to popular Western music or whose own traditional music does not include harmonic tone combinations. Thus, interesting lines of work regarding the emergence of consonance preferences and advantages are still open and are very much worth exploring.

Conclusion

Like the ability for language, the ability for music has been documented in all human societies and has been claimed to be unique to our species. However, little is known about its evolutionary history and the cognitive mechanisms essential for perceiving and appreciating music (e.g., Patel, 2008). One way to advance our knowledge of the basic mechanisms that allow the emergence of the musical ability is to explore the initial state of music knowledge prior to experience and how relevant experience alters this state (Hauser & McDermott, 2003). In this article, we have shown how our understanding of consonance (a key feature in harmonic music) greatly benefits from experiments with nonhuman animals. Research suggests that, unlike humans, rats do not seem to form separate categories for consonant and dissonant chords. This is reflected in the difficulties they show with whole-octave transpositions. Furthermore, the processing advantage for consonance over dissonance that has been documented in human listeners does not seem to be present in nonhuman animals. The origins of such differences are still to be understood and could be linked to different sources of relevant experience. Comparative work across a wide range of species and musical traits is thus pivotal to advancing our understanding of our very special musical ability and the different components that it involves.

References

Bidelman, G. M. (2013). The role of the auditory brainstem in processing musically relevant pitch. Frontiers in Psychology, 4, 264. doi:10.3389/fpsyg.2013.00264

Bidelman, G., & Heinz, M. (2011). Auditory-nerve responses predict pitch attributes related to musical consonance–dissonance for normal and impaired hearing. Journal of the Acoustical Society of America, 130, 1488–1502. doi:10.1121/1.3605559

Bolhuis, J., Okanoya, K., & Scharff, C. (2010). Twitter evolution: Converging mechanisms in birdsong and human speech. Nature Reviews Neuroscience, 11, 747–759. doi:10.1038/nrn2931

Bowling, D., & Purves, D. (2015). A biological rationale for musical consonance. Proceedings of the National Academy of Sciences, 112, 11155–11160. doi:10.1073/pnas.1505768112

Bowling, D., Sundararajan, J., Han, S., & Purves, D. (2012). Expression of emotion in Eastern and Western music mirrors vocalization. PLoS ONE, 7, e31942. doi:10.1371/journal.pone.0031942

Bregman, M., Patel, A., & Gentner, T. (2012). Stimulus-dependent flexibility in non-human auditory pitch processing. Cognition, 122, 51–60. doi:10.1016/j.cognition.2011.08.008

Bregman, M., Patel, A., & Gentner, T. (2016). Songbirds use spectral shape, not pitch, for sound pattern recognition. Proceedings of the National Academy of Sciences, 113, 1666–1671. doi:10.1073/pnas.1515380113

Brooks, D., & Cook, R. (2009). Chord discrimination by pigeons. Music Perception, 27, 183–196. doi:10.1525/mp.2010.27.3.183

Brudzynski, S., & Fletcher, N. (2010). Rat ultrasonic vocalization: Short-range communication. In S. Brudzynski (Ed.), Handbook of mammalian vocalization. Elsevier.

Burns, E. M. (1999). Intervals, scales, and tuning. In D. Deutsch (Ed.), The psychology of music (pp. 215–264). San Diego, CA: Academic Press. doi:10.1016/B978-012213564-4/50008-1

Butler, J., & Daston, P. (1968). Musical consonance as musical preference: A cross-cultural study. The Journal of General Psychology, 79, 129–142. doi:10.1080/00221309.1968.9710460

Chiandetti, C., & Vallortigara, G. (2011). Chicks like consonant music. Psychological Science, 22, 1270–1273. doi:10.1177/0956797611418244

Cook, P., Rouse, A., Wilson, M., & Reichmuth, C. (2013). A California sea lion (Zalophus californianus) can keep the beat: Motor entrainment to rhythmic auditory stimuli in a non vocal mimic. Journal of Comparative Psychology, 127, 412–427. doi:10.1037/a0032345

Crespo-Bojorque, P., & Toro, J. M. (2015). The use of interval ratios in consonance perception by rats (Rattus norvegicus) and humans (Homo sapiens). Journal of Comparative Psychology, 129, 42–51. doi:10.1037/a0037991

Crespo-Bojorque, P., & Toro, J. M. (2016). Processing advantages for consonance: A comparison between rats (Rattus norvegicus) and humans (Homo sapiens). Journal of Comparative Psychology, 130, 97–108. doi:10.1037/com0000027

Fishman, Y. I., Volkov, I. O., Noh, M. D., Garell, P. C., Bakken, H., Arezzo, J. C., … Steinschneider, M. (2001). Consonance and dissonance of musical chords: Neural correlates in auditory cortex of monkeys and humans. Journal of Neurophysiology, 86, 2761–2788.

Friedrich, A., Zentall, T., & Weisman, R. (2007). Absolute pitch: Frequency-range discriminations in pigeons (Columba livia)—Comparisons with zebra finches (Taeniopygia guttata) and humans (Homo sapiens). Journal of Comparative Psychology, 121, 95–105. doi:10.1037/0735-7036.121.1.95

Fritz, T., Jentschke, S., Gosselin, N., Sammler, D., Peretz, I., Turner, R., … Koelsch, S. (2009). Universal recognition of three basic emotions in music. Current Biology, 19, 573–576. doi:10.1016/j.cub.2009.02.058

Gill, K. Z., & Purves, D. (2009). A biological rationale for musical scales. PLoS ONE, 4, e8144. doi:10.1371/journal.pone.0008144

Hasegawa, A., Okanoya, K., Hasegawa, T., & Seki, Y. (2011). Rhythmic synchronization tapping to an audio-visual metronome in budgerigars. Scientific Reports, 1, 120. doi:10.1038/srep00120

Hauser, M., & McDermott, J. (2003). The evolution of the music faculty: A comparative perspective. Nature Neuroscience, 6, 663–668. doi:10.1038/nn1080

Helmholtz, H. (1877/1954). On the sensations of tone as a physiological basis for the theory of music (A. Ellis, Trans.). New York, NY: Dover Publications.

Hoeschele, M., Cook, R., Guillette, L., Brooks, D., & Sturdy, C. (2012). Black-capped chickadee (Poecile atricapillus) and human (Homo sapiens) chord discrimination. Journal of Comparative Psychology, 126, 57–67. doi:10.1037/a0024627

Hoeschele, M., Weisman, R., Guillette, L., Hahn, A., & Sturdy, C. (2013). Chickadees fail standardized operant tests for octave equivalence. Animal Cognition, 16, 599–609. doi:10.1007/s10071-013-0597-z

Hoeschele, M., Weisman, R., & Sturdy, C. (2012). Pitch chroma discrimination, generalization and transfer tests of octave equivalence in humans. Attention, Perception, & Psychophysics, 74, 1742–1760. doi:10.3758/s13414-012-0364-2

Honing, H., Merchant, H., Háden, G., Prado, L., & Bartolo, R. (2012). Rhesus monkeys (Macaca mulatta) detect rhythmic groups in music, but not the beat. PLoS ONE, 7, e51369. doi:10.1371/journal.pone.0051369

Hulse, S. H., Bernard, D. J., & Braaten, R. F. (1995). Auditory discrimination of chord-based spectral structures by European starlings. Journal of Experimental Psychology: General, 124, 409–423. doi:10.1037/0096-3445.124.4.409

Hulse, S. H., & Cynx, J. (1985). Relative pitch perception is constrained by absolute pitch in songbirds (Mimus, Molothrus, and Sturnus). Journal of Comparative Psychology, 99, 176–196. doi:10.1037/0735-7036.99.2.176

Izumi, A. (2000). Japanese monkeys perceive sensory consonance of chords. Journal of the Acoustical Society of America, 108, 3073–3078. doi:10.1121/1.1323461

Juslin, P., & Laukka, P. (2003). Communication of emotions in vocal expression and music performance: Different channels, same code? Psychological Bulletin, 129, 770–814. doi:10.1037/0033-2909.129.5.770

Koda, H., Basile, M., Oliver, M., Remeuf, K., Nagumo, S., Blois-Heulin, C., & Lemasson, A. (2013). Validation of an auditory sensory reinforcement paradigm: Campbell’s monkeys (Cercopithecus campbelli) do not prefer consonant over dissonant sounds. Journal of Comparative Psychology, 127, 265–271. doi:10.1037/a0031237

Komeilipoor, N., Rodger, M., Craig, C., & Cesari, P. (2015). (Dis-) Harmony in movement: Effects of musical dissonance on movement timing and form. Experimental Brain Research, 233, 1585–1595. doi:10.1007/s00221-015-4233-9

Kuhl, P., & Miller, J. (1975). Speech perception by the chinchilla: Voiced-voiceless distinction in alveolar plosive consonants. Science, 190, 69–72. doi:10.1126/science.1166301

Litvin, Y., Blanchard, C., & Blanchard, R. (2007). Rat 22 kHz ultrasonic vocalizations as alarm cries. Behavioural Brain Research, 182, 166–172. doi:10.1016/j.bbr.2006.11.038

Masataka, N. (2006). Preference for consonance over dissonance by hearing newborns of deaf parents and of hearing parents. Developmental Science, 9, 46–50. doi:10.1111/j.1467-7687.2005.00462.x

McDermott, J., & Hauser, M. D. (2004). Are consonant intervals music to their ears? Spontaneous acoustic preferences in a nonhuman primate. Cognition, 94, B11–B21. doi:10.1016/j.cognition.2004.04.004

McDermott, J., & Hauser, M. (2005). The origins of music: Innateness, uniqueness, and evolution. Music Perception: An Interdisciplinary Journal, 23, 29–59. doi:10.1525/mp.2005.23.1.29

McDermott, J., Schultz, A., Undurraga, E., & Godoy, R. (2016). Indifference to dissonance in native Amazonians reveals cultural variation in music perception. Nature, 535, 547–550. doi:10.1038/nature18635

McKinney, M., Tramo, M., & Delgutte, B. (2001). Neural correlates of musical dissonance in the inferior colliculus. In D. J. Breebaart, A. J. M. Houtsma, A. Kohlrausch, V. F. Prijs, & R. Schoonhoven (Eds.), Physiological and psychophysical bases of auditory function (pp. 83–89). Maastricht, the Netherlands: Shaker.

McLachlan, N., Marco, D., Light, N., & Wilson, S. (2013). Consonance and pitch. Journal of Experimental Psychology: General, 142, 1142–1158. doi:10.1037/a0030830

Merker, B., Morley, I., & Zuidema, W. (2015). Five fundamental constraints on theories of the origins of music. Philosophical Transactions of the Royal Society B, 370, 20140095. doi:10.1098/rstb.2014.0095

Minati, L., Rosazza, C., D’Incerti, L., Pietrocini, E., Valentini, L., Scaioli, V., … Bruzzone, M. (2009). Functional MRI/event-related potential study of sensory consonance and dissonance in musicians and nonmusicians. Neuroreport, 20, 87–92. doi:10.1097/WNR.0b013e32831af235

Patel, A. (2008). Music, language and the brain. Oxford, England: Oxford University Press.

Patel, A. (2014). The evolutionary biology of musical rhythm: Was Darwin wrong? PLoS Biology, 12, e1001821. doi:10.1371/journal.pbio.1001821

Patel, A. (in press). Why don’t songbirds use pitch to recognize tone sequences? The informational independence hypothesis. Comparative Cognition and Behavior Reviews.

Patel, A., Iversen, J., Bregman, M., & Schulz, I. (2009). Studying synchronization to a musical beat in nonhuman animals. Annals of the New York Academy of Sciences, 1169, 459–469. doi:10.1111/j.1749-6632.2009.04581.x

Perani, D., Saccuman, M., Scifo, P., Spada, D., Andreolli, G., Rovelli, R., … Koelsch, S. (2010). Functional specializations for music processing in the human newborn brain. Proceedings of the National Academy of Sciences, 107, 4758–4763. doi:10.1073/pnas.0909074107

Plantinga, J., & Trehub, S. (2014). Revisiting the innate preference for consonance. Journal of Experimental Psychology: Human Perception and Performance, 40, 40–49. doi:10.1037/a0033471

Plomp, R., & Levelt, W. (1965). Tonal consonance and critical bandwidth. The Journal of the Acoustical Society of America, 38, 548–560. doi:10.1121/1.1909741

Proverbio, M., & Orlandi, A. (2016). Instrument-specific effects of musical expertise on audiovisual processing (clarinet vs. violin). Music Perception, 33, 446–456. doi:10.1525/mp.2016.33.4.446

Ramus, F., Hauser, M., Miller, C., Morris, D., & Mehler, J. (2000). Language discrimination by human newborns and by cotton-top tamarin monkeys. Science, 288, 349–351. doi:10.1126/science.288.5464.349

Regnault, P., Bigand, E., & Besson, M. (2001). Different brain mechanisms mediate sensitivity to sensory consonance and harmonic context: Evidence from auditory event-related brain potentials. Journal of Cognitive Neuroscience, 13, 241–255. doi:10.1162/089892901564298

Schellenberg, E., & Trehub, S. (1994). Frequency ratios and the discrimination of pure tone sequences. Perception & Psychophysics, 56, 472–478. doi:10.3758/BF03206738

Schellenberg, E., & Trehub, S. (1996). Natural musical intervals: Evidence from infant listeners. Psychological Science, 7, 272–277. doi:10.1111/j.1467-9280.1996.tb00373.x

Schön, D., Regnault, P., Ystad, S., & Besson, M. (2005). Sensory consonance: An ERP study. Music Perception, 23, 105–117. doi:10.1525/mp.2005.23.2.105

Schwartz, D., Howe, C., & Purves, D. (2003). The statistical structure of human speech sounds predicts musical universals. The Journal of Neuroscience, 23, 7160–7168.

Sugimoto, T., Kobayashi, H., Nobuyoshi, N., Kiriyama, Y., Takeshita, H., Nakamura, T., & Hashiya, K. (2010). Preference for consonant music over dissonant music by an infant chimpanzee. Primates, 51, 7–12. doi:10.1007/s10329-009-0160-3

Terhardt, E. (1984). The concept of musical consonance: A link between music and psychoacoustics. Music Perception, 1, 276–295. doi:10.2307/40285261

Toro, J. M. (2016). Something old, something new: Combining mechanisms during language acquisition. Current Directions in Psychological Science, 25, 130–134. doi:10.1177/0963721416629645

Toro, J. M., & Hoeschele, M. (2017). Generalizing prosodic patterns by a non-vocal learning mammal. Animal Cognition, 20, 179–185. doi:10.1007/s10071-016-1036-8

Trainor, L., & Heinmiller, G. (1998). The development of evaluative responses to music: Infants prefer to listen to consonance over dissonance. Infant Behavior and Development, 21, 77–88. doi:10.1016/S0163-6383(98)90055-8

Trainor, L., Tsang, C., & Cheung, V. (2002). Preference for sensory consonance in 2- and 4-month-old infants. Music Perception, 20, 187–194. doi:10.1525/mp.2002.20.2.187

Tramo, M., Cariani, P., Delgutte, B., & Braida, L. (2001). Neurobiological foundations for the theory of harmony in Western tonal music. Annals of the New York Academy of Sciences, 930, 92–116. doi:10.1111/j.1749-6632.2001.tb05727.x

Watanabe, S., Uozumi, M., & Tanaka, N. (2005). Discrimination of consonance and dissonance in Java sparrows. Behavioural Processes, 70, 203–208. doi:10.1016/j.beproc.2005.06.001

Zentner, M. R., & Kagan, J. (1998). Infants’ perception of consonance and dissonance in music. Infant Behavior and Development, 21, 483–492. doi:10.1016/S0163-6383(98)90021-2