Experimental Divergences in the Visual Cognition of Birds and Mammals

Muhammad A. J. Qadri & Robert G. Cook

Department of Psychology, Tufts University

Abstract

The comparative analysis of visual cognition across classes of animals yields important information regarding underlying cognitive and neural mechanisms involved with this foundational aspect of behavior. Birds, and pigeons specifically, have been an important source and model for this comparison, especially in relation to mammals. During these investigations, an extensive number of experiments have found divergent results in how pigeons and humans process visual information. Four areas of these divergences are collected, reviewed, and analyzed. We examine the potential contribution and limitations of experimental, spatial, and attentional factors in the interpretation of these findings and their implications for mechanisms of visual cognition in birds and mammals. Recommendations are made to help advance these comparisons in service of understanding the general principles by which different classes and species generate representations of the visual world.

Keywords: Pigeons; Humans; Visual cognition; Spatial attention; Perceptual grouping; Perceptual completion; Visual illusions

Corresponding author:

Dr. Robert G. Cook, Department of Psychology, Tufts University, 490 Boston Ave., Medford, MA 02155, USA; Phone: 617-627-2456; Fax: 617-627-3181; E-mail: Robert.Cook@tufts.edu

This research was supported by National Eye Institute grant EY022655.

Visual cognition is critical to the behavior of complex animals. It generates the working internal cognitive representations of the external world that guide action, orientation, and navigation. The extensive study of the human animal has dominated the theoretical and empirical investigations of vision and visual cognition (Palmer, 1999). In comparison, the psychological investigation of visual cognition in other animals has received far less attention. Not surprisingly, studies of nonhuman primates have attracted the most interest, precisely because their visual systems most closely resemble our own. Despite this focus on primates, there is a long and distinguished record of comparative research with non-primate species that has profoundly enhanced our understanding of vision and its underlying mechanisms (e.g., Hartline & Ratliff, 1957; Hubel & Wiesel, 1962; Lettvin, Maturana, McCulloch, & Pitts, 1959; Reichardt, 1987). An appreciation of the entire spectrum of visually driven cognitive systems and how vision is implemented in different nervous systems is key to a complete and general understanding of the evolution, operations, and functions of vision and its role in cognition and intelligent behavior (Cook, 2001; Cook, Qadri, & Keller, in press; Lazareva, Shimizu, & Wasserman, 2012; Marr, 1982).

One of the most fruitful investigations of these comparative questions has focused on the visual behavior of birds, especially in comparison to mammals (Cook, 2000, 2001; Zeigler & Bischof, 1993). The importance of the visual modality for these highly mobile creatures is beyond question. Beginning with their origins within the lineage of feathered theropod dinosaurs (Alonso, Milner, Ketcham, Cookson, & Rowe, 2004; Corwin, 2010; Lautenschlager, Witmer, Altangerel, & Rayfield, 2013; Sereno, 1999), birds have subsequently and rapidly evolved on a number of fronts, including pulmonary physiology, the development of endothermy, distinctive strategies for reproduction and growth, and their central neuroanatomy (Balanoff, Bever, & Norell, 2014; Xu et al., 2014). Over that time, birds have evolved central and visual systems that are well suited for high-speed flight within the restrictions of muscle-powered transport. While quite large relative to birds’ body size, the avian brain is still small compared to primates’ in absolute size. Given the computational complexity of vision, the difficulty of building flexible and accurate optically based machine vision systems, and the large portions of the primate brain devoted to visual cognition, the small size and visual excellence of the avian brain present an interesting challenge and scientific opportunity. Because they combine high visual functionality with small absolute brain size, birds provide an excellent model system for guiding the practical and efficient engineering of small visual prostheses, while simultaneously advancing our general theoretical understanding of visual cognition.

The ancestors of modern-day birds and mammals followed contrasting diurnal and nocturnal evolutionary pathways during the Mesozoic era, and as a result, these two major vertebrate classes have evolved to rely more heavily on structurally different portions of their nervous systems to mediate visually guided behavior (Cook et al., in press). Most likely because of their nocturnal origins, mammals have evolved solutions to the challenges of vision that developed into numerous lemnothalamic cortical mechanisms and areas that primarily mediate visual cognition (Felleman & Van Essen, 1991; Homman-Ludiye & Bourne, 2014; Kaas, 2013). In contrast, birds use a collothalamically dominant vision system, mediated by the tectum and related structures, to process visual information. From one perspective, birds may represent the evolutionary zenith of the animals that rely on this ancient primary ascending pathway for vision. The complementary pathway present in both animal classes, however, is still critical to visual function. The collothalamic pathway involving the superior colliculus and pulvinar has well-established and important visual and attentional functions in mammals (Müller, Philiastides, & Newsome, 2005; Petersen, Robinson, & Morris, 1987; Robinson, 1972), while the visual Wulst in the avian lemnothalamic pathway may play similar roles in birds (Shimizu & Hodos, 1989). Given these differences in the relative weighting and possible functions of these pathways in each class, the direct comparison of these two types of vision systems provides theoretically revealing information regarding the implementation and role of general, specific, and alternative routes to representing and understanding the visual world (Marr, 1982).

Pigeons have been the dominant avian model and focus species for this comparison. Years of intensive study have resulted in this bird’s visual, cognitive, and neural systems being the best understood of any avian species (Cook, 2001; Honig & Fetterman, 1992; Spetch & Friedman, 2006a; Zeigler & Bischof, 1993). Because the study of visual cognition in mammals has been dominated by studies of humans, the outcomes of the laboratory studies of pigeons have naturally and frequently been compared with our own visual behavior. More important, the extensive theoretical concepts developed from research on human visual cognition have regularly served as a guide for developing investigations of avian visual cognition. Combined, these forces have produced an extensive number of studies in which these two contrasting vertebrate species have been tested with identical or highly similar visual stimuli.

What is the current status of this scientific comparison of pigeon and human visual cognition? Moreover, what similarities and differences have been established regarding how these different classes of animals solve the challenging problems of visually navigating and acting in an object-filled world? On one front, a number of similarities have been established. For example, humans and pigeons discriminate letters of the English alphabet in highly analogous ways, suggesting that the two species may process shape in similar ways (D. S. Blough, 1982; D. S. Blough & Blough, 1997). At the level of underlying mechanisms, the early processes responsible for dimensional grouping appear to share organizational principles, combining, using, and recognizing color, shape, and relative illumination in comparable ways (Cook, 1992a, 1992b, 1993; Cook, Cavoto, Katz, & Cavoto, 1997; Cook, Cavoto, & Cavoto, 1996; Cook & Hagmann, 2012; Cook, Qadri, Kieres, & Commons-Miller, 2012). The investigation of visual search behavior has suggested that the search for targets in noise is governed by the same basic parameters across species (D. S. Blough, 1977, 1990, 1992, 1993; P. M. Blough, 1984, 1989; Cook & Qadri, 2013). Extensive research examining the pictorial discrimination of various objects derived from “geons” has suggested that pigeons and humans share commonalities in their processing of these stimuli as well (Kirkpatrick-Steger, Wasserman, & Biederman, 1996, 1998; Van Hamme, Wasserman, & Biederman, 1992; Wasserman & Biederman, 2012; Wasserman, Kirkpatrick-Steger, Van Hamme, & Biederman, 1993; Young, Peissig, Wasserman, & Biederman, 2001). These parallels carry the important theoretical implication that the natural structure of the visual world may restrict the classes of computational solutions to a fairly small set of mechanisms, even across quite different biological visual systems. Thus, whether an animal uses a collothalamic-dominant (birds) or lemnothalamic-dominant (mammals) visual system, it may operate using similar computational and processing principles because of the structure of the visual world (J. J. Gibson, 1979; Marr, 1982).

Despite the existence of these numerous experimental parallels, this “representational equivalence” hypothesis is surely not a comprehensive description. Given just the disparity in absolute size and internal organization between the brains of birds and mammals, one might reasonably question how these visual systems could function comparably. All of our additional cortical areas and tissue must allow us some enhanced functionality, such as mental imagery or manipulation. Consistent with this line of thinking, a number of experimental findings create problems for such a “representational equivalence” hypothesis. These findings include discrepancies, anomalies, or divergences in the apparent perceptual behaviors of these two species across many experiments. These divergences have not been one or two isolated occurrences in a few limited settings that might be overlooked, brushed aside, or dismissed. To the contrary, many occur in persistent clusters in theoretically relevant areas. The purpose of this article is to collect and evaluate lines of these divergent findings and their implications for theories of comparative visual cognition.

Conceptual Overview and Framework

There are a number of areas in which divergent findings have been reported involving pigeons and humans. Examining such divergences is an important way to evaluate the scope and limitations of representational equivalence and to identify potential functional differences. Precisely identifying and isolating where avian and mammalian visual systems differ and where they share commonalities is crucial to reverse engineering their computational mechanisms and to evaluating the different possible routes to visual representation.

The quintessential outcome of any one of these studies is that the perceptual responses of the pigeons fail to mimic those of humans (or vice versa depending on your taxonomic affection). For example, a number of psychophysical investigations have found that pigeons have poorer acuity and motion thresholds, lower flicker fusion thresholds, and differences in their processing of color relative to humans (Bischof, Reid, Wylie, & Spetch, 1999; P. M. Blough, 1971; Hendricks, 1966; Wright & Cumming, 1971). Beyond these differences in basic visual sensory function between pigeon and human, another difference is the pigeon’s strong propensity to attend to smaller local features or portions of stimuli rather than grasp the larger global form (Aust & Huber, 2001, 2003; K. K. Cavoto & Cook, 2001; Cerella, 1977, 1986; Emmerton & Renner, 2009; Kelly, Bischof, Wong-Wylie, & Spetch, 2001; Lea, Goto, Osthaus, & Ryan, 2006; Navon, 1977, 1981; Vallortigara, 2006). This same local precedence has also been observed to some degree outside of the operant chamber, in spatial cognition investigations of landmark use (Kelly, Spetch, & Heth, 1998; Spetch, 1995; Spetch & Edwards, 1988). While pigeons are able in the right circumstances to process global information, processing information at larger spatial scales seems far more difficult for them (Cook, Goto, & Brooks, 2005; Fremouw, Herbranson, & Shimp, 2002; Kelly et al., 2001). Such psychophysical and attentional differences are significant and likely play important roles in resolving some of the findings considered in more detail below.

To make this review manageable, however, we have restricted our considerations to four topics that have generated a larger corpus of divergent findings in the domain of visual cognition. Specifically, we look at the discrimination of different arrangements of line-based shapes, the grouping and integration of dot-based perceptual information, the perceptual completion of spatially separated information, and the perception of geometric visual illusions. These topics were selected because each represents a persistent area of experimental divergence centered on processes of fundamental theoretical importance to visual cognition.

The pivotal issue in each case is whether any dissimilarities between avian and primate perception reflect a true qualitative difference in how the two classes of animals visually perceive and process the world, or instead reflect experimental artifacts or limitations that do not represent the true, underlying state of affairs. Despite the best intentions of the experimenters to investigate the same question across these species by testing similar or identical stimuli, many different variables and procedural issues could potentially produce a given divergent result.

Some of these complicating issues may be related to the experimental or discriminative procedures involved with testing different species. Differences in visual angle, subject training, previous experience, or experimental instructions are all examples of this type of issue. For instance, humans are often explicitly instructed about what features are relevant during testing. In marked contrast, pigeons always have to discover the relevant visual features on their own based on differential reinforcement. Consequently, the two species may ultimately perceive or attend to different features or aspects of superficially identical displays. If this occurs, it naturally limits any implications for our deeper understanding of visual processing. To draw the strongest conclusions, both species must attend to the same features in the experimental displays.

Likewise, discrepancies may stem from other attentional or cognitive differences between these species. As mentioned, pigeons regularly exhibit a bias to attend to spatially local information in preference to more globally available information in visual discriminations. Humans, in contrast, appear to be much more global in their allocation of attention. Because of its potential impact, a framework for thinking about how animals might spatially attend to stimuli is worth considering at this juncture.

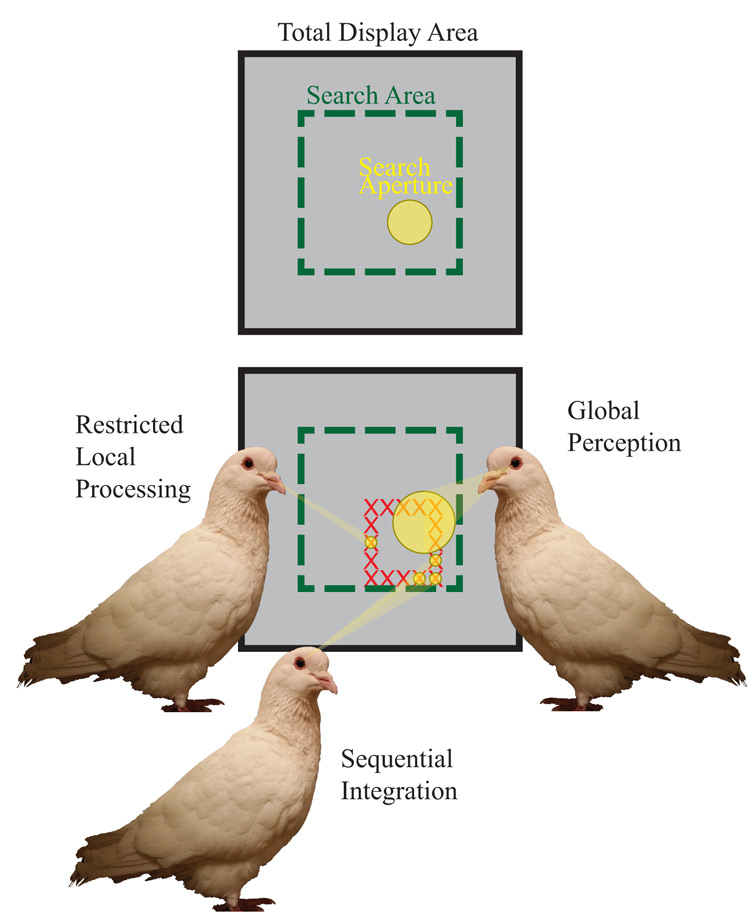

Figure 1 shows two important facets of spatially controlled attention. The first is the size of the area processed in a single visual scan. This might be best thought of as a visual “aperture,” an adjustable area, presumably circular, over which information is processed without any additional eye fixations. The second important component is the size of the spatial search area that is analyzed or integrated over using a series of successive fixations of this visual aperture around the display. This area is also adjustable, and presumably operates over a larger region to integrate information. It could be as expansive as the whole operant chamber, or it could be limited to the regions of the display critical for correct discrimination. These two spatial components, visual aperture and search area, can likely vary independently, although a trade-off necessarily exists between them. When a small visual aperture is employed, for example, greater scanning over a display may be strongly encouraged.

Figure 1. Depiction of the different hypothesized modes of spatial attention mechanisms and their critical features. We propose that pigeons use an attentional aperture over areas of the display to visually process information in the operant chamber. These distinctions yield two distinct types of global strategies: sequential integration and global perception. As depicted by the pigeon on the bottom, sequential integration applies a small attentional aperture to multiple spatial locations, integrating the information from each aperture to yield a global percept. The pigeon on the right depicts global perception, where a global feature is extracted from a single, large aperture. In contrast, the pigeon on the left applies a small aperture to only a single location, exemplifying a mode of (spatially) restricted local processing.

The combination of these two attentional attributes results in four modes by which information can be extracted from a visual display. One means of globally processing information over a spatial area would be to employ a large visual aperture and reduce the need for much successive scanning. This is much like what occurs in parallel search or perceptual grouping, especially in humans, where information is extracted or discriminated rapidly over a large spatial extent. A second way is to employ a smaller or more local visual aperture with numerous successive scans of a display that are then combined in later computations. This would yield a process similar to serial visual search, offering a different way for animals to integrate information successively over a spatial extent. We think it is important to distinguish between these alternatives when considering their impact on global processing. We use the term global perception to indicate the use of a single, large spatial aperture, and the term sequential integration to indicate the hybrid use of a smaller local aperture with a global scanning and integrating strategy. The third approach would be to use a smaller visual aperture with a spatially restricted scanning strategy, without integrating information from separate scans. This would lead to a more particulate perception of the display. We use the term restricted local processing to describe this attentional approach, distinguishing it from sequential integration, which may use a similarly sized aperture but a more expansive scanning strategy. The logical fourth alternative combines a large visual aperture with wide scanning over a large spatial area, employing a large number of scans or fixations. This last mode resembles how we experience and navigate the natural world. Given the restricted spatial scale of the typical operant setting, we think this mode plays a less prominent role in the findings below (though it merits considerably more research attention). These processing distinctions are worth keeping in mind when evaluating the results collected here.
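To make the two-by-two structure of this framework concrete, the minimal Python sketch below encodes it. It is an illustrative assumption rather than a model from the literature: the boolean inputs, the enum names, and the `min_fixations` helper are hypothetical. The fixation count is only an area-based lower bound, included to show why a smaller aperture encourages more scanning of the same search area.

```python
import math
from enum import Enum


class AttentionMode(Enum):
    """The four hypothesized modes of spatial attention (names are illustrative)."""
    RESTRICTED_LOCAL = "restricted local processing"
    SEQUENTIAL_INTEGRATION = "sequential integration"
    GLOBAL_PERCEPTION = "global perception"
    LARGE_APERTURE_WIDE_SCANNING = "large aperture with wide scanning"


def classify_mode(large_aperture: bool, expansive_scanning: bool) -> AttentionMode:
    """Map the two spatial components (aperture size, scan extent) onto a mode."""
    if large_aperture:
        if expansive_scanning:
            return AttentionMode.LARGE_APERTURE_WIDE_SCANNING
        return AttentionMode.GLOBAL_PERCEPTION
    if expansive_scanning:
        return AttentionMode.SEQUENTIAL_INTEGRATION
    return AttentionMode.RESTRICTED_LOCAL


def min_fixations(search_radius: float, aperture_radius: float) -> int:
    """Hypothetical helper: area-based lower bound on the number of circular
    apertures of radius r needed to cover a circular search area of radius R,
    i.e. ceil((R / r) ** 2). Real coverage needs more, because circles cannot
    tile a region without gaps or overlap; the bound only illustrates the
    aperture/scanning trade-off.
    """
    if search_radius <= 0 or aperture_radius <= 0:
        raise ValueError("radii must be positive")
    if aperture_radius >= search_radius:
        return 1  # one fixation already spans the whole search area
    return math.ceil((search_radius / aperture_radius) ** 2)
```

For example, halving the aperture radius for a fixed search area raises the bound from `min_fixations(10, 10) == 1` to `min_fixations(10, 5) == 4`, which is the sense in which a small aperture pushes an animal toward either sequential integration (many integrated scans) or restricted local processing (few, unintegrated scans).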

Line-Based Shape Processing

Overall, the review is divided into four sections covering each broad topic area, followed by a discussion that integrates the interim conclusions of each section in service of answering the larger theoretical question of how avian and mammalian visual cognition are similar and different. This first section examines divergent findings involved with the processing of shape discriminations by pigeons. Because the studies in this section differ in their motivations, stimuli, and tasks, they do not easily form a shared theoretical focus. Thus, the possibility that we are combining different underlying phenomena by grouping them should be kept in mind. They do share, perhaps importantly, the common feature of using stimuli composed of different complex arrangements of line segments.

Stimulus Configuration

One important visual outcome in humans is a set of findings classified as configural superiority effects. Here, humans perform better when the arrangement or context of simple elements creates configural or emergent properties that facilitate discrimination. The important theoretical idea captured by such results is that the emergent or holistic features of some stimuli precede or dominate the processing of their component elements (Kimchi & Bloch, 1998; Pomerantz, 2003; Pomerantz & Pristach, 1989).

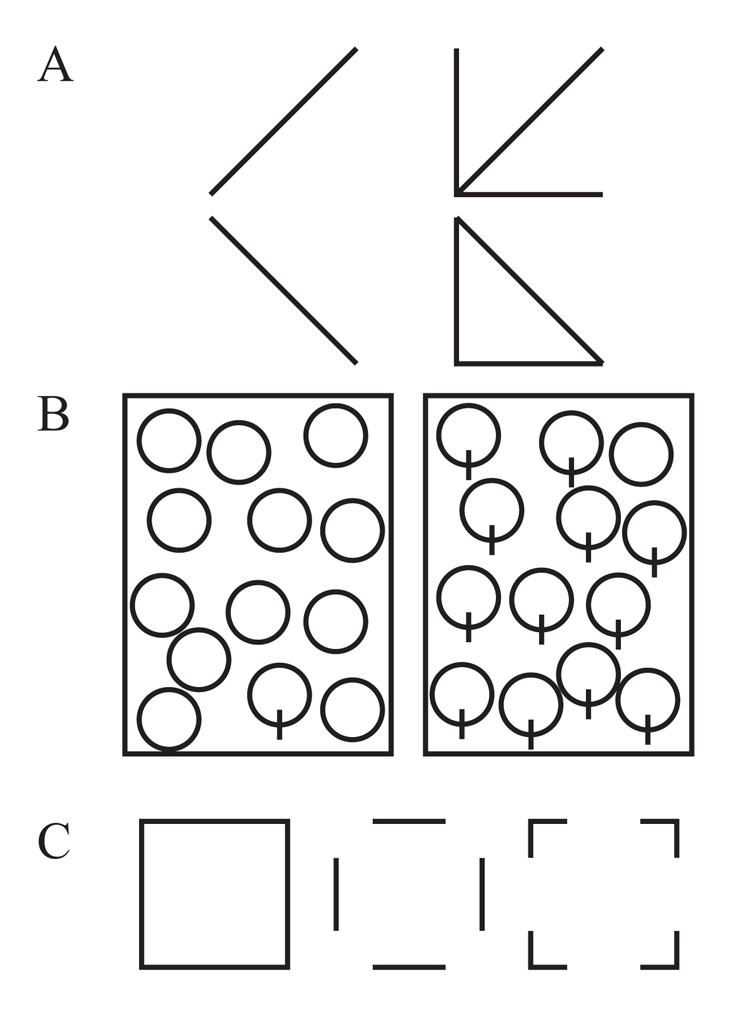

The classic example involves a simple discrimination of the diagonal tilt of two lines, as shown in Figure 2A. Adding a redundant “L” context to the tilted lines transforms this into a discrimination of a “triangle” versus an “arrow,” which facilitates performance in people (Pomerantz, Sager, & Stoever, 1977). Because of its importance to the visual mechanisms of holistic and analytic processing, this same type of visual phenomenon has been examined in pigeons.

D. S. Blough (1984) reported the first results testing configural-like stimuli with pigeons. He found mixed results in the two experiments briefly described in that chapter. Using a simultaneous discrimination and three highly experienced birds familiar with making letter discriminations, he reported the results of a discrimination with pattern-producing configural contexts. These consisted of a contextual L that produced an emergent “triangle” or “arrow” for rightward or leftward diagonal lines, or a “U” or “sideways U” when added to horizontal and vertical lines. Through a series of reacquisitions, the configural patterns were learned more quickly and responded to faster than the context-free line discriminations, suggesting that pigeons experience the same kind of configural superiority effect as humans. Subsequent investigations of this type of effect were not as encouraging, however.

In the same chapter, Blough also reported tests with line stimuli in which the added context resulted in a figure that formed a possible 3D object, as well as equally complex contexts that had no obvious 3D interpretation (see Figure 16.4 in Blough, 1984 for examples). For humans, the configural 3D figures were far easier to discriminate because they formed different global objects. For pigeons, this form of configural discrimination was reported as difficult to learn, regardless of the potential 3D interpretation. This suggests that the different line placements did not produce configural depth relations or objects in pigeons, or if they did, the resulting figures did not aid in the discriminability of the display.

In a more extensive investigation of this general issue with a larger number of pigeons, Donis and Heinemann (1993) also trained their animals to discriminate between rightward and leftward sloped diagonal lines in isolation or with the same addition of an L context (i.e., the classic “triangle” vs. “arrow”). In Donis and Heinemann’s study, however, the pigeons showed reduced accuracy when the discriminated lines were embedded in the configural L context. Unlike humans, the pigeons were more accurate when discriminating the lines in isolation. Notable methodological differences could have contributed to this divergence from Blough’s brief description. Whereas the pigeons in D. S. Blough (1984) pecked directly at the correct stimulus, the pigeons in Donis and Heinemann had a subsequent spatial choice after pecking the discriminative stimulus. Also, the stimuli in Donis and Heinemann’s investigation were about four times larger than those in D. S. Blough (1984), raising the possibility that limits of processing might be related to the size of the stimuli. This latter possibility would suggest that Blough’s pigeons may have employed a global processing strategy, while Donis and Heinemann’s pigeons could have relied on sequential integration or restricted local processing. Donis and Heinemann’s results are not unique, however.

Kelly and Cook (2003) conducted three experiments with different groups of pigeons, examining the role of contextual information in the discrimination of diagonal lines and of a mirror-reversed L. One group was tested in a target localization task using texture displays made from either repeated lines or configural patterns. The second group of pigeons was tested in an oddity-based same/different task. Similar to Donis and Heinemann (1993), the pigeons exhibited a reduction in target localization or same/different choice accuracy with the pattern-producing stimuli in comparison to the simple discrimination of diagonal lines. This was true with both small and large stimulus sizes, suggesting that visual angle was not particularly important. Furthermore, a second pair of configural stimuli involving a “positional discrimination” was also tested. Here the “featural” stimuli consisted of an L versus a mirror-reversed L, and the redundant context that permitted emergent features was a diagonal line (see Figure 1B in Kelly and Cook, 2003, for examples of these stimuli). This type of stimulus showed no difference between the elemental and configural conditions in the same/different task, although it did reveal a configural superiority effect in the target localization task. This configural superiority effect may have been caused by the high similarity of the textured regions produced by the mirror-reversed elements. Nevertheless, in general, the pigeons in this study were typically better when the discriminative line stimuli were presented in isolation than in a configural organization.

A different configural effect found with humans involves stimuli using component line elements arranged to form a human face. Testing stimuli that produce a face superiority effect in humans, Donis, Chase, and Heinemann (2005) found that their pigeons’ capacity to discriminate a U (the “smile”) from its flipped counterpart ∩ (the “frown”) was impaired when a triplet of dots arranged as “eyes” and a “nose” was placed above it. The pigeons were further impaired when these features were enclosed within a larger ellipse, a condition in which humans see a clear and readily discriminable face. Thus, instead of promoting discrimination, the addition of these configuration-producing elements obscured the critical portion of the discrimination for the pigeons. Given that the pigeons failed to learn to discriminate these complete, configural displays even with extensive training, these authors suggested that the additional context increased the similarity between the configural stimuli for the pigeons instead of accentuating or producing new featural differences as it appears to in humans.

Thus, despite the promising start, the preponderance of the evidence suggests that pigeons tend not to exhibit the same configural superiority effects as observed in humans. As assessed by several experiments using different discrimination approaches, pigeons do not consistently benefit from the addition of contextual or configural information in these stimuli that humans find beneficial. Instead, the more typical result seems to be that the pigeons exhibit a form of configural inferiority effect, where the added elements interfere with discrimination by increasing the similarity of figures. This suggests that the two species are deriving or attending to different features within these stimuli.

Search Asymmetry

Another line of divergent research in this area is related to visual search asymmetries. In multiple investigations with humans, Treisman and various colleagues have found that not all sets of features produce identical modes of visual search (Treisman & Gormican, 1988; Treisman & Souther, 1985). In particular, Treisman and Souther found that some shapes could produce parallel search (like a single Q embedded in Os; see Figure 2B), while a reversal of the same features would result in serial-like search (a single O embedded in Qs). Treisman’s theoretical analysis of these search asymmetries focused on the fact that distinctive visual features were selectively activated for one type of search but not the other, such as detecting the presence of the singular line when a Q was the target in a set of Os.

Because of its theoretically revealing nature, Allan and Blough (1989) examined visual search in pigeons with variations of two types of search asymmetry stimuli previously established with humans. Their search displays involved search between O and Q and between triangles with and without a gap along one edge. Overall, they found no search differences depending on which line shapes were the targets or distractors; the presence or absence of a feature in the target generally did not affect search speed or accuracy for either the added-line or gap stimuli. This divergence from humans, the apparent absence of a feature search asymmetry, is likely not due to pigeons being unable to exhibit such asymmetries in search tasks. Using search displays made up of groups of smaller squares of differing colors, Pearce and George (2003) found that pigeons did show asymmetries in accuracy when distinctive color features were located in the target rather than the distractors. This suggests that the divergence between humans and pigeons found by Allan and Blough may be specifically tied to the dimensional or featural processing of lines or shapes.

In later work, D. S. Blough (1993) examined the use of cues in stimuli that were square-like and contained an additional line and/or gap. On any given trial, an array of 32 stimuli in four rows was displayed, and the pigeon had to identify which stimulus within the array was unique. In this visual search task, Blough analyzed how reaction time varied according to the specific stimulus pairs tested. He found that the pigeons appeared to attend independently to the presence of the additional lines in the different stimuli and to their location at either the top or bottom of the shapes. The gap in the stimulus appeared not to capture attention, consistent with Allan and Blough’s (1989) earlier results. Importantly, this consistent and measurable within-stimulus effect highlights how even small differences in spatial attention directed toward different local areas of stimuli may be an important concern in the analysis of stimuli.

Vertices and Edges

One important finding from the study of human visual cognition is our reliance on information at the junctions or vertices of objects for their recognition. Biederman (1987), for example, found that deleting the vertices of an object is far more detrimental to its subsequent recognition by humans than deleting an equivalent amount of contour from the middle of line segments (see Figure 2C). The analysis and prioritization of vertices as a critical feature also plays a classic and prominent role in object recognition algorithms used by computers (e.g., Harris & Stephens, 1988; Trajković & Hedley, 1998). One possible reason for this reliance is that junctions, compared with edges, carry more information for deriving the non-accidental structural relations among an object’s surfaces. Focusing on vertices thus reduces the probability that accidental visual properties will cause objects to be misperceived. It is natural to ask whether pigeons also use this feature in the recognition of objects.
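To illustrate why corner-detection algorithms single out vertices, the pure-Python sketch below computes the Harris and Stephens (1988) corner response R = det(M) − k·trace(M)², where M is the local structure tensor built from image gradients. Several simplifications are assumptions of this sketch: central-difference gradients, an unweighted 3×3 window instead of the usual Gaussian, k = 0.04, and a synthetic image. At a vertex, gradients point in two directions, so M has two large eigenvalues and R is positive; along a straight edge, gradients share one direction, det(M) is near zero, and R is negative. This illustrates the machine-vision point only and is not a model of pigeon or human object recognition.

```python
def harris_response(img, y, x, k=0.04):
    """Harris-Stephens corner response R = det(M) - k * trace(M)**2 at (y, x).

    M sums products of central-difference gradients over an unweighted
    3x3 window (a simplification; the usual formulation uses a Gaussian
    window). (y, x) must be at least two pixels from the image border.
    """
    sxx = syy = sxy = 0.0
    for yy in range(y - 1, y + 2):
        for xx in range(x - 1, x + 2):
            ix = (img[yy][xx + 1] - img[yy][xx - 1]) / 2.0  # horizontal gradient
            iy = (img[yy + 1][xx] - img[yy - 1][xx]) / 2.0  # vertical gradient
            sxx += ix * ix
            syy += iy * iy
            sxy += ix * iy
    det = sxx * syy - sxy * sxy
    trace = sxx + syy
    return det - k * trace * trace


# Synthetic 10x10 image: a bright quadrant whose corner (vertex) sits at (4, 4).
SIZE = 10
img = [[1.0 if (yy >= 4 and xx >= 4) else 0.0 for xx in range(SIZE)]
       for yy in range(SIZE)]

corner = harris_response(img, 4, 4)  # at the vertex: positive response
edge = harris_response(img, 7, 4)    # midway along the vertical edge: negative
flat = harris_response(img, 7, 7)    # inside the uniform region: zero
```

With these settings the vertex scores about 0.78 and the mid-edge point about -0.09, so a detector thresholding R keeps the junction and discards the straight contour, mirroring the prioritization of vertices described above.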

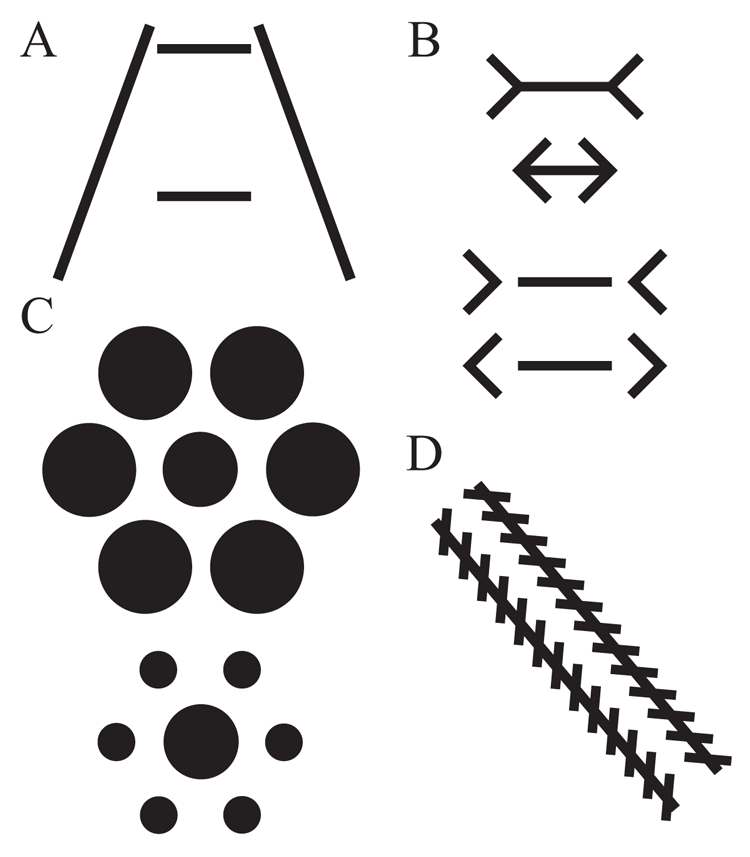

Figure 2. Examples of stimuli from different experiments focused on line-based figural processing. Panel A depicts a classic human configural superiority effect that has been tested with pigeons. Panel B shows a search asymmetry task in which the unique element contains an added line, which helps humans find the target but does not help pigeons. Panel C depicts two-dimensional shape stimuli from which either the vertices (points of cotermination) or the line segments between them have been removed.

Several investigations have suggested that pigeons might differ from humans in this regard. The earliest, most prominent, and most complete example was reported by Rilling, De Marse, and La Claire (1993). They trained pigeons to discriminate different shapes using both 2D (outlined square vs. triangle) and 3D (outlined cube vs. prism) figures. They then tested the pigeons with portions of the shapes deleted at either the centers of the lines or their junctions. Across both sets of stimuli, the pigeons were more disrupted by deletions midway between the vertices than by deletions at the vertices. This outcome contrasts markedly with the typical human finding.
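The notion of an “equivalent” deletion can be made concrete with a short sketch. It assumes a closed polygon outline and a fixed gap length, and the function names `delete_contour` and `visible_length` are hypothetical; it is not the stimulus procedure of Biederman (1987) or Rilling et al. (1993). Removing the same gap at every vertex or at every side’s midpoint leaves equal amounts of visible contour, provided every side is longer than the gap.

```python
import math


def _lerp(a, b, t):
    """Point a fraction t of the way from a to b."""
    return (a[0] + (b[0] - a[0]) * t, a[1] + (b[1] - a[1]) * t)


def delete_contour(vertices, gap, where):
    """Visible segments of a closed polygon after deleting `gap` units of
    contour centred on each vertex ("vertex") or on each side's midpoint
    ("midsegment"). Both conditions remove len(vertices) * gap in total."""
    n = len(vertices)
    segments = []
    for i in range(n):
        a, b = vertices[i], vertices[(i + 1) % n]
        length = math.dist(a, b)
        if gap >= length:
            raise ValueError("gap must be shorter than every side")
        half = (gap / 2) / length  # half the gap, as a fraction of this side
        if where == "vertex":
            # half of each vertex gap is taken from each adjoining side
            segments.append((_lerp(a, b, half), _lerp(a, b, 1 - half)))
        elif where == "midsegment":
            # one gap centred on the middle of the side leaves two pieces
            segments.append((a, _lerp(a, b, 0.5 - half)))
            segments.append((_lerp(a, b, 0.5 + half), b))
        else:
            raise ValueError(f"unknown deletion site: {where}")
    return segments


def visible_length(segments):
    """Total length of contour that remains visible."""
    return sum(math.dist(p, q) for p, q in segments)
```

For a square with sides of 4 units and a gap of 1 unit, both conditions leave 12 units of contour; they differ only in where the missing information lies.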

Several unpublished observations have since been consistent with Rilling et al.’s (1993) findings. An unpublished experiment from the Comparative Cognition Laboratory at the University of Iowa (Wasserman, personal communication) further suggested that vertices made less of a contribution to the recognition of geons by pigeons. Here segments were removed from complex line drawings of objects either at the vertices or between them. Similar to Rilling et al., the pigeons performed better when segments were removed at the vertices. This outcome was complicated by additional differences between the conditions, however, because the complexity of the drawings made it difficult to equate the length of the remaining line segments across conditions. In our own lab, we have found something similar in a target visual search task using texture stimuli. Cook (1993) reported that linear arrangements of distractors interfered more than either randomized or spaced distractors in such a task (see examples of these stimuli in Figure 14.1 of Cook, 1993, p. 247). In subsequent unreported experiments, we found that edge-like linear distractors interfered more with target search than distractors made from the same number of elements but forming vertex-like right angles. This outcome hints that the edges of the square target regions were more critical to their identification by the pigeons than the corners.

Using a different approach, Peissig, Young, Wasserman, and Biederman (2005) examined how similarly pigeons process shaded complex objects and line drawings of the same objects. Across a variety of training and transfer designs, the pigeons did not appear to use the common edges or edge relations across shaded and line objects to mediate their discrimination, as their transfer between these sets of stimuli was poor. The results suggest instead that the pigeons used different representations of each group of stimuli, perhaps based on the availability of surface characteristics. Peissig et al. suggested that their pigeons may have placed greater importance on surface features than on edges in learning these discriminations. Not all studies have found this type of result.

In contrast, a recent experiment by Gibson, Lazareva, Gosselin, Schyns, and Wasserman (2007) suggested that line co-termination in complex stimuli is an important factor for pigeons. In their experiment, pigeons were trained to discriminate four shaded geons in a choice task. They used a “bubbles” technique, in which differing amounts of each image were randomly made visible through a set of Gaussian apertures placed over the stimuli. Accuracy was averaged across these varying amounts and locations of visible information to identify which portions of the images were most important to the pigeons’ discrimination. Statistical pixel-based analyses of the resulting classification images indicated that both pigeons and humans used pixels near vertices more than those near edges or surfaces. Visual inspection of the classification images for individual pigeons, however, does suggest that line segment, edge, and surface information may also have made important contributions to each bird’s idiosyncratic solution. Nonetheless, this work provides the best evidence yet that, for pigeons, the vertices or co-terminations of objects carry more weight than their edges.
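The logic of a classification image can be illustrated with a simulated observer. The sketch below is illustrative only and rests on several assumptions: a small 16 × 16 image, visibility masks formed from the maximum of three Gaussian bubbles, and a hypothetical observer whose accuracy depends on a single diagnostic pixel. The classification image is computed as the mean mask on correct trials minus the mean mask on incorrect trials, which is one common variant and not necessarily the analysis used by Gibson et al. (2007). All function names are hypothetical.

```python
import math
import random


def bubble_mask(w, h, n_bubbles, sigma, rng):
    """Visibility mask in [0, 1]: each pixel takes the maximum of several
    Gaussian apertures ("bubbles") centred at random locations."""
    centres = [(rng.uniform(0, w), rng.uniform(0, h)) for _ in range(n_bubbles)]
    two_s2 = 2.0 * sigma ** 2
    return [
        [max(math.exp(-((x - cx) ** 2 + (y - cy) ** 2) / two_s2) for cx, cy in centres)
         for x in range(w)]
        for y in range(h)
    ]


def classification_image(trials, w, h):
    """Mean mask on correct trials minus mean mask on incorrect trials.
    Positive values mark pixels whose visibility went with correct responses."""
    sums = {True: [[0.0] * w for _ in range(h)], False: [[0.0] * w for _ in range(h)]}
    counts = {True: 0, False: 0}
    for mask, correct in trials:
        counts[correct] += 1
        acc = sums[correct]
        for y in range(h):
            for x in range(w):
                acc[y][x] += mask[y][x]
    if counts[True] == 0 or counts[False] == 0:
        raise ValueError("need both correct and incorrect trials")
    return [
        [sums[True][y][x] / counts[True] - sums[False][y][x] / counts[False]
         for x in range(w)]
        for y in range(h)
    ]


def simulate_observer(n_trials=2000, w=16, h=16, diag=(5, 5), seed=0):
    """Hypothetical observer whose accuracy rises from 50% toward 95% as the
    diagnostic pixel `diag` (x, y) becomes more visible."""
    rng = random.Random(seed)
    trials = []
    for _ in range(n_trials):
        mask = bubble_mask(w, h, n_bubbles=3, sigma=2.0, rng=rng)
        visibility = mask[diag[1]][diag[0]]
        correct = rng.random() < 0.5 + 0.45 * visibility
        trials.append((mask, correct))
    return classification_image(trials, w, h)


def peak(ci):
    """(x, y) location of the largest classification-image value."""
    return max(((x, y) for y in range(len(ci)) for x in range(len(ci[0]))),
               key=lambda p: ci[p[1]][p[0]])
```

Running `peak(simulate_observer())` should return a location at or near the diagnostic pixel (5, 5), because only trials on which that region happened to be visible raised the simulated observer’s accuracy.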

Taken together, these sundry lines of investigation into the processing of line-based stimuli suggest that pigeons do not always readily reproduce the visual phenomena observed in humans. Pigeons frequently show configural effects that differ from, or are possibly opposite to, those in humans. Pigeons appear not to exhibit the same feature-based search asymmetries as humans with seemingly comparable shape-based stimuli. Finally, pigeons do not consistently prioritize junctions and co-terminations in shape discriminations as humans do. While each of these conclusions involves a different type of discrimination, the one common feature across these investigations is the discrimination of simple lines and their relations. As outlined previously, the key question is whether these findings truly capture a difference in processing or represent some kind of experimental by-product or artifact.

One possibility is that emergent structures built from simple lines carry greater meaning for humans. Perhaps these impoverished stimuli require some abstraction to recognize their relation or correspondence to real objects. Humans may have this capacity, but the pigeon visual system may require more complete and realistic stimuli to properly process elements and their configurations (B. R. Cavoto & Cook, 2006). One reason that Gibson et al.’s (2007) results might differ, for instance, is that their object stimuli were more complete and realistic because of the presence of surface shading information. Furthermore, humans can bring linguistic labels and extensive experience reading complex line shapes to bear on these types of stimuli. Each of these experiential factors could confer an advantage in processing lines and their configurations. This line of reasoning suggests that examining arrangements that are more naturally salient to pigeons (such as those related to food or mating) could reveal processing more similar to that in humans.

Alternatively, pigeons and humans could simply have focused on different aspects of the stimuli because of experiential, instructional, or other cognitive differences. In humans, global perception of the stimuli allows all of the display’s features to form new unitary forms that are easy to discriminate. This might not be the case for pigeons, whose perception may be dominated by the local details of the stimuli. This difference could have manifested in these experiments in several ways. One possibility is the use of experimental conditions that do not promote the use of larger visual apertures or global perception strategies by the pigeons. This concern stems from the fact that the majority of the pigeon studies varied neither stimulus size nor stimulus location during training. Fixed-size and fixed-position procedures are likely the conditions most supportive of restricted local processing strategies. Related concerns can also be raised regarding differences in the visual angle of the stimuli experienced by the two species. Furthermore, humans often receive explicit instructions about what to attend to, whereas pigeons do not. Thus, before resolving the theoretical question of whether pigeons process line information or employ features in shape processing differently than humans, it would be valuable to evaluate and rule out the possibility that the results are artifacts of restricted attentional strategies, stimulus size, or instruction.

If we ignore these concerns for a moment, the pattern of results raises the possibility that humans and pigeons process line-based visual features in different fashions. The machine vision literature is replete with different ways to combine the visual features corresponding to the edges and vertices of objects, as well as other higher-level shape features, to generate representations of objects in space. If there are differences in the way these line-based shape features are processed, despite the apparent similarities in the behavior of pigeons and humans in many settings, it would give rise to questions about how such features coalesce into representations that produce similar actions (Pomerantz, 2003). This larger issue is returned to subsequently in the general discussion.

Dot-Based Perceptual Grouping

Moving beyond the “simpler” line stimuli of the first section, the next area examines more complex stimuli perhaps best placed under the heading of perceptual grouping. Broadly conceived, perceptual grouping involves combining identical, disconnected local elements into larger, global configurations. Some investigations of grouping with humans and pigeons have shown similar or overlapping patterns of responding. For example, as investigated with texture segregation tasks, the early perceptual grouping of color and shape has generally been found to be similar across the two species (Cook, 1992b, 1992c, 2000; Cook et al., 1997; Cook et al., 1996; Cook, Katz, & Blaisdell, 2012). Based on such evidence, we have suggested that early vision is organized along highly similar lines in the two species, perhaps because of the importance of determining the extent and relations of object surfaces. Nevertheless, there are several areas where pigeons have consistently deviated from humans with stimuli that presumably involve similar grouping processes. A place to start is with larger global stimuli built from small, localized dots.

Glass Patterns

In an important study in this area, Kelly et al. (2001) examined how pigeons and humans process Glass patterns. Glass patterns are theoretically revealing stimuli created by taking a field of randomly placed dots, applying a small geometric transformation (such as a rotation, expansion, or translation) to a copy of the field, and superimposing the transformed copy on the original (Glass, 1969; see Figure 3A). Humans readily perceive the global organization of the resulting Glass patterns from these dotted dipoles. Furthermore, humans detect circular or radial Glass patterns in random noise more easily than either translational or spiral patterns (Kelly et al., 2001; Wilson & Wilkinson, 1998). A similar sensitivity to circular information has been found with gratings in nonhuman primates (Gallant, Braun, & Van Essen, 1993). It has been hypothesized that this particular pattern superiority is caused by specialized concentric form detectors that might be the precursors to cortical face processing (Wilson, Wilkinson, & Asaad, 1997).
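The construction of a Glass pattern can be sketched in a few lines. This is a minimal illustration under stated assumptions (a square field spanning −1 to 1, a fixed transformation magnitude `delta`, and the hypothetical helper names `glass_dipoles`, `random_dots`, and `flatten`); it is not the stimulus code used by Kelly et al. (2001). The random control simply places an equal number of dots independently, matching the “comparable number of randomly placed dots” described in the text.

```python
import math
import random


def glass_dipoles(n, kind="concentric", delta=0.05, seed=None):
    """Return n dipoles ((x, y), (px, py)): each random dot paired with a
    copy displaced by a small global transformation of the whole field."""
    rng = random.Random(seed)
    dipoles = []
    for _ in range(n):
        x, y = rng.uniform(-1, 1), rng.uniform(-1, 1)
        if kind == "concentric":      # small rotation about the display centre
            px = x * math.cos(delta) - y * math.sin(delta)
            py = x * math.sin(delta) + y * math.cos(delta)
        elif kind == "radial":        # small expansion away from the centre
            px, py = x * (1 + delta), y * (1 + delta)
        elif kind == "horizontal":    # translation along x
            px, py = x + delta, y
        elif kind == "vertical":      # translation along y
            px, py = x, y + delta
        else:
            raise ValueError(f"unknown pattern kind: {kind}")
        dipoles.append(((x, y), (px, py)))
    return dipoles


def random_dots(n_dots, seed=None):
    """Control or noise dots placed independently at random."""
    rng = random.Random(seed)
    return [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(n_dots)]


def flatten(dipoles):
    """Superimpose original and transformed dots into a single dot list."""
    return [point for pair in dipoles for point in pair]
```

A noisy display of the kind used to compare pattern types could then be built as, for example, `flatten(glass_dipoles(100, "concentric")) + random_dots(50)`.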

Using these types of dotted displays, Kelly et al. (2001) trained pigeons to discriminate vertical, horizontal, circular, and radial Glass patterns from a comparable number of randomly placed dots. Although the task was generally more difficult for the pigeons, Kelly et al. found no differences in their accuracy across the different global patterns. When different numbers of dots were placed randomly, creating noise in the displays, the pigeons continued to perform equivalently across the display types, unlike humans, who showed the typical benefits of circular-like patterns. Kelly et al. suggested that this species difference was potentially driven by the neurological differences between avian and primate visual systems. Consistent with this analysis, we recently extended these findings to another bird species, the starling (Qadri & Cook, 2014). Using Glass patterns that duplicated those tested with pigeons, the starlings’ behavior was highly similar to the pigeons’, with no differences found among the patterns.

Biological Motion

Another important area of visual cognition research involves the study of biological motion (Johansson, 1973). Coordinated moving points or dots that correspond to the motions of different articulated behaviors, such as in point-light displays (PLDs), powerfully invoke the perception of acting agents in humans (see Figure 3B). Humans can recognize a variety of actions and socially relevant features (e.g., age, gender, emotion) from these coordinated motions (Blake & Shiffrar, 2007). As a result, the study of action in humans has historically relied on these form-impoverished displays because they isolate motion-related contributions to action recognition. Because of their dominance in the study of action in humans, attempts have been made to examine action recognition in animals using PLDs with varying degrees of success (Blake, 1993; J. Brown, Kaplan, Rogers, & Vallortigara, 2010; Oram & Perrett, 1994; Parron, Deruelle, & Fagot, 2007; Puce & Perrett, 2003; Tomonaga, 2001). These include several investigations testing birds (Dittrich, Lea, Barrett, & Gurr, 1998; Regolin, Tommasi, & Vallortigara, 2000; Troje & Aust, 2013; Vallortigara, Regolin, & Marconato, 2005).

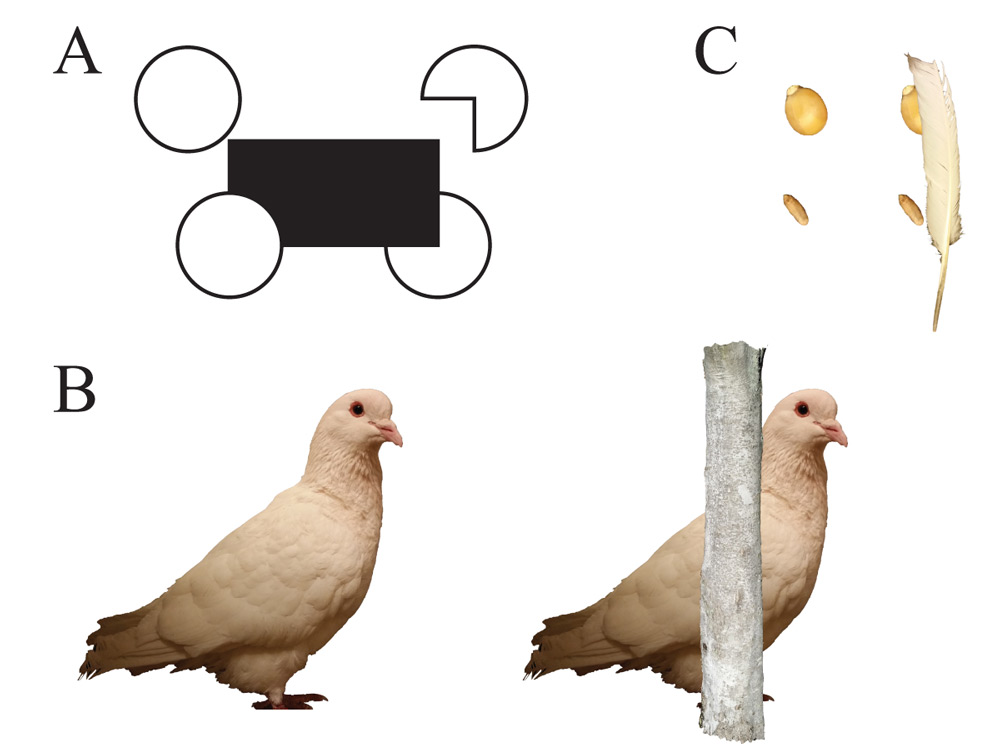

Figure 3. Examples of stimuli from experiments on dot-based perceptual grouping. Panel A depicts a Glass pattern (left) and a random pattern in the style of Kelly et al. (2001; right). Panel B depicts a subset of frames from a fully-rendered, background-included sequence of an animal running with the corresponding biological-motion pattern below it (Qadri, Asen, et al., 2014).

The earliest attempt to examine action perception by pigeons was conducted by Dittrich et al. (1998). They trained pigeons to discriminate between videos showing pigeons engaged in pecking and non-pecking behaviors using either full-figured, complete videos or PLDs. While pigeons trained on full-figured displays showed some transfer to PLDs, those trained with PLDs failed to show any transfer to full-figured displays. Differences in the background between the videos and PLDs, resulting from the recording settings needed to generate PLD videos, may have been a complicating factor that interfered with transfer of this action discrimination across the conditions.

That the processing of PLDs and full-figured complete displays is not equivalent is further supported by studies of action recognition using digital models (Asen & Cook, 2012; Qadri, Asen, & Cook, 2014; Qadri, Sayde, & Cook, 2014). After training pigeons to discriminate complete, digitally rendered animal models engaged in either walking or running actions (Asen & Cook, 2012), Qadri, Asen, and Cook (2014) found that the discrimination of these action categories did not transfer to PLD models that were built using the same articulated structure, motion, and background as the trained actions. This result persisted across differences in the size of the defining dots and changes in the overall visual angle of the display, both changes designed to promote the perceptual grouping of the separated dots.

Because of the problems associated with getting good transfer back and forth between complete models and PLD representations of the same actions, Troje and Aust (2013) instead trained pigeons to discriminate PLDs from the beginning of their experiment. Eight pigeons were trained to discriminate leftward from rightward walking in pigeon and human PLDs using a choice task. After learning the task, the pigeons were tested using globally and locally inconsistent displays. These stimulus-analytic tests suggested that six of the eight pigeons were attending primarily to the local motion of the dots, most often the dots corresponding to the feet, to perform the discrimination. Two pigeons were seemingly responding based on the overall global walking direction of the models. Thus, while the majority of pigeons seemed to locally process only a subset of the elements in these displays, a limited number did seem capable of attending and responding to the larger global organization of the motions. While this last investigation is perhaps promising, the processing of PLDs, unlike in humans, does not appear to readily generate in pigeons a global perceptual representation of a behaving animal in the way that full-featured videos of the same behavior do.

Other studies have examined the perception of moving dot-based stimuli using simpler patterns than these complex biological motion displays. For example, Nankoo, Madan, Spetch, and Wylie (2014) presented pigeons and humans with dynamic random-dot patterns that created the impression of rotational, radial, or spiral motion. By moving only a subset of the dots coherently across frames, they were able to vary the degree of motion coherence. Using a simultaneous choice task, both species discriminated an organized motion display from a randomized display. Humans were much better at the task than the pigeons, needing only 16–20% coherence to discriminate organized motion, whereas the pigeons needed more than 90% coherence to perform comparably. Furthermore, the species differed in their relative ease of discriminating the different types of motion. Humans were equally good with rotational and radial motion, while the pigeons were best at discriminating rotational motion. Humans found the spiral motion displays most difficult to detect, while radial motion was poorest for the pigeons. Such results suggest basic differences in the motion perception systems of these species.
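Coherence in displays of this kind is typically manipulated by letting only a proportion of the dots carry the global motion. The following is a minimal sketch under stated assumptions (a unit circular aperture, signal dots re-chosen on every frame, noise dots replotted at random, and spiral motion defined as an equal mix of rotation in radians and expansion in aperture radii); it is not the stimulus code of Nankoo et al. (2014), and the function names are hypothetical.

```python
import math
import random


def random_point_in_disc(rng):
    """Uniformly distributed point inside the unit-radius circular aperture."""
    r = math.sqrt(rng.random())
    theta = rng.uniform(0.0, 2.0 * math.pi)
    return (r * math.cos(theta), r * math.sin(theta))


def flow_step(x, y, kind, speed):
    """Move one signal dot along a global flow field, in polar coordinates."""
    r, theta = math.hypot(x, y), math.atan2(y, x)
    if kind == "rotation":
        theta += speed                  # tangential motion, radius unchanged
    elif kind == "radial":
        r += speed                      # expansion away from the centre
    elif kind == "spiral":
        theta += speed / math.sqrt(2)   # equal mix of rotation and expansion
        r += speed / math.sqrt(2)
    else:
        raise ValueError(f"unknown motion type: {kind}")
    return (r * math.cos(theta), r * math.sin(theta))


def next_frame(dots, coherence, kind, speed, rng):
    """One frame of a hypothetical random-dot display: a `coherence` fraction
    of the dots (re-chosen each frame) follows the flow; the rest are replotted."""
    n_signal = round(coherence * len(dots))
    signal = set(rng.sample(range(len(dots)), n_signal))
    frame = []
    for i, (x, y) in enumerate(dots):
        if i in signal:
            nx, ny = flow_step(x, y, kind, speed)
            # signal dots carried outside the aperture are replotted at random
            if math.hypot(nx, ny) <= 1.0:
                frame.append((nx, ny))
            else:
                frame.append(random_point_in_disc(rng))
        else:
            frame.append(random_point_in_disc(rng))
    return frame
```

At a coherence of 0.2, for example, only about one dot in five moves with the global pattern on any given frame, so the organized motion must be extracted by pooling over many dots and frames.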

The above experiments all suggest that when pigeons are required to group separated dotted elements into a global pattern, they have considerable difficulty doing so, or they process the displays in ways that differ from humans. Putting aside concerns about their naturalness, the pigeons did not perceive Glass patterns in ways that mimic humans. When dot arrays were placed in coordinated motion, as in studies of biological motion, the ready and apparent global perception of “behavior” from these dots is seemingly absent in the pigeons, unless perhaps specifically trained. When combined with their generally greater difficulty at detecting coordinated, dot-based motion, it seems reasonable at the current moment to conclude that neither static nor moving dots readily produce the same type of perceptual representation in pigeons as generated in humans. One possibility is that these stimuli are difficult to discriminate because they resist being perceptually grouped into larger configurations due to the spatial separation between the elements.

Again, it is necessary to raise the possibility that this difference originates in the established attentional bias that pigeons have against globally perceiving stimuli rather than a limitation on perceptual grouping. Sequential integration and restricted local processing strategies both would be specifically challenged by these types of dotted stimuli because their small identical components contain little local information to solve the task. The availability of information at these smaller levels (i.e., dipole spacing or orientation) may prevent the pigeons from seeing the larger patterns in these stimuli. The considerable appeal of the dotted stimuli in this section is that they require some analysis of extended areas for any level of discrimination. Somewhat surprisingly, the size and flexibility of pigeons’ visual or attentional aperture has not been experimentally determined, and its control mechanisms are poorly understood. This is a key oversight and an important area for future investigation. If the above difficulties with dotted stimuli are associated with spatial separation, then the next topic on perceptual grouping is likely directly related.

Perceptual Completion

The real world regularly requires the nervous system to make inferences about incomplete or overlapping information. For instance, when one object occludes a portion of another object behind it, as when an animal moves behind trees, humans effortlessly see one continuous and unified object over time. This human capacity for figural completion from perceptual fragments contributes importantly to our perception of a coherent visual world (Kellman & Shipley, 1991). The need to correctly connect separated edges and surfaces across such gaps makes this one of vision’s more challenging computational problems (Drori, Cohen-Or, & Yeshurun, 2003; Williams & Hanson, 1994; Williams & Jacobs, 1997; Zhang, Marszałek, Lazebnik, & Schmid, 2007). Because of its critical role in visual processing and the considerable number of anomalous results found with pigeons, perceptual completion has been investigated repeatedly in this species.

One of the first studies to examine perceptual completion in pigeons was conducted by Cerella (1980). Using a shape discrimination task, pigeons were trained to peck at the outline of a triangle and not at a set of other geometric shapes. After learning, the pigeons were tested with partial triangles and with triangles increasingly “covered” by a black “occluder.” Cerella found that responding decreased as the occluder covered more of the S+ triangle. Interestingly, the partial figures supported more responding than the occluded figures. He suggested that this decreased responding was possibly due to the pigeons not completing the invisible parts of the triangle behind the occluder, although neophobia to this new display element may also have been a factor. Subsequent studies in the same report had pigeons discriminate among Peanuts characters. These found that an occluded figure was responded to at levels similar to complete figures. The results also indicated that local features, rather than the entire figure, controlled the discrimination. In that light, any evidence for “completion” from these tests is weakened.

Sekuler, Lee, and Shettleworth (1996) conducted a more complete and demanding test with pigeons using a different shape discrimination to index completion. Pigeons were first trained to respond differentially to full and partial circles (Pac-Man figures: partial circles with a 90° pie-piece removed; see Figure 4A). This training was conducted with a rectangle separated by a short distance from the Pac-Man figure. During the critical test, the partial circles were placed so as to appear to be complete circles partially occluded by the rectangle. The pigeons consistently responded as if they saw only an incomplete figure, not a completed, inferred circle. The test was then repeated using an elongated ellipse and a rectangle, on the assumption that completing a smaller area might be easier. The same result was found, with the pigeons reporting “incomplete” figures. In some sense, however, the pigeons reported exactly what they had been trained to do for the Pac-Man-like figure. The result suggests that any good continuity potentially available across the edges of such stimuli was not sufficient to produce the same amodal perception of these figures as in humans.

Fujita and Ushitani (2005) examined the same fundamental question using a visual search procedure. Here, the pigeons were trained to search for a red square target with a small notch taken out of it among distractors that were complete squares. To prepare the birds for the later occluder condition, training included a white square placed in various nonadjacent positions around the target to familiarize the pigeons with its presence. When subsequently tested with new configurations in which the notched target was adjacent to the white square, creating the perceptual possibility that the target was a complete square behind the occluder, the pigeons exhibited no differences in accuracy or reaction time. This suggests that they did not see the critical configuration as forming a “complete” figure, which would instead have impaired or slowed responding. Tests with humans using the same displays, on the other hand, confirmed that such conditions supported this perceptual inference, as shown by slowed reaction times. Such failures to find evidence of completion prompted a number of experiments attempting to determine whether some additional factor was preventing the pigeons from evidencing perceptual completion.

One factor that has been examined is whether common motion might enhance the capacity of the pigeons to see complete stimuli. To help the birds better understand the demands of the task, Ushitani, Fujita, and Yamanaka (2001) had the fragmented elements of the display move together in a synchronized fashion consistent with their potential connectedness. Following matching-to-sample training with moving elements that consisted of either one object or two aligned objects in common motion, they tested the pigeons with the occluded version of the stimuli. In this condition, however, the pigeons still reported perceiving two separate stimuli instead of a single completed one. Additional experiments with modifications of the moving stimuli designed to further enhance their potential perceptual unification did not alter this basic result.

Another concern that might be raised about these experiments is the relative naturalness or ecological validity of the stimuli tested. As a result, several investigations have asked whether the use of more species-appropriate stimuli might produce perceptual completion. Watanabe and Furuya (1997) used a go/no-go task to test pigeons with televised images of full-colored conspecifics. They found that pigeons trained to detect the presence or absence of a pigeon behind an occluder showed no greater transfer to a complete image of the pigeon than to a partial one. Their results suggest the birds were not seeing the partial images of the conspecifics used in training as “complete” pigeons. Shimizu (1998) also tested the perception of conspecifics by pigeons, measuring the courtship behavior elicited in male pigeons by live and video-recorded female conspecifics. In one test, he occluded either the upper or the lower half of one of the videos. The results suggested that the head, rather than the lower portions of the body, contained the critical stimulus for eliciting courtship behavior, although neither half was as effective as the complete stimulus. While not designed to test perceptual completion, these results are consistent with the hypothesis that pigeons do not see partially occluded conspecifics as identical to complete ones.

Continuing in the same vein, Aust and Huber (2006) tested this issue using higher quality, photograph-based stimuli of pigeons. Pigeons were trained to discriminate between complete and fragmented pictures of conspecifics in a go/no-go task. In these experiments, the occluder used in training and testing resembled a tree trunk (e.g., Figure 4B). After training, seven of the ten pigeons tested with occluded versions of the photos showed evidence suggestive of perceptual completion. Subsequent tests revealed, however, that this outcome was a byproduct of the pigeons using simple visual features that correlated with the complete and fragmented images during acquisition, indicating that the initially promising results were not products of true completion. After further training, one pigeon was able to learn the discrimination independently of these secondary feature cues, but it responded to the critical occluded test exemplar as if it were an incomplete figure.

Figure 4. Examples of stimuli from experiments testing perceptual completion. Panel A shows a canonical setup, in which the pigeons are trained with the circle and Pac-Man shape separated from the rectangle (top targets) and then tested with the circle and Pac-Man shape adjacent to the rectangle (bottom targets; similar to Sekuler et al., 1996). Analogous displays can be constructed using pictures of grain (Panel B), similar to those of Ushitani and Fujita (2005), and images of conspecifics (Panel C) with size- and species-appropriate occluders, similar to those of Aust and Huber (2006).

Ushitani and Fujita (2005) used a nonsocial approach to examine the potential contribution of ecological validity to perceptual completion. In this study, they trained pigeons to visually search for and discriminate between small images of grains and non-grains (e.g., Figure 4C). After learning this task, the pigeons were tested with displays that mixed images of grains occluded by a feather with equivalently truncated (partially deleted) photos of grains. Perhaps not surprisingly, the complete, unoccluded grains were selected first from the display. More critically, the images of truncated grains were selected before the occluded ones. If the pigeons had been seeing the occluded grains as completed objects, one might have expected them to be pecked before the truncated ones. To rule out neophobia toward the occluder, the researchers conducted further tests in which the pigeons were familiarized with the occluder before testing, but this made no difference to the order in which the occluded and truncated stimuli were selected. Thus, across these several different experiments, each attempting to increase the ecological validity of the stimuli in a different way, the pigeons showed no better evidence of perceptual completion than in the original demonstrations using more artificial stimuli.

Another approach to this general question is to use a discrimination in which completion is not the direct source of responding but is inferred from a different type of outcome. Fujita (2001) used a line-length estimation task to tap the hypothesized completion process that occurs when a line meets an edge. In primates, this task indexes completion through a systematic error that occurs when a line ends abruptly at an adjacent figure: humans judge such lines to be slightly longer than their veridical length. This “illusory continuation” is presumably due to an inferred extrapolation of the line behind the adjacent figure. After training three pigeons to classify a range of lines as either “short” or “long” in a choice task, Fujita found no similar continuation effect in the birds. If anything, they seemed to report the lines as longer when the lines were more distant from the adjacent figure.

Given the wide range of stimuli, approaches, and additional factors involved, the above results suggest that pigeons may not experience perceptual completion in the same way as primates. In fact, the pigeons frequently react quite literally, faithfully reporting exactly what is on the display regardless of its alternative perceptual possibilities. Whatever is going on here, this type of completion phenomenon is certainly not easily produced in pigeons, unlike in human observers. Most of these tests have involved presumed “occlusion” by other stimuli in their testing procedures. The pigeon’s response to “occlusion” in other circumstances is not always so straightforward, however.

DiPietro, Wasserman, and Young (2002) tested the recognition of three-dimensionally depicted drawings of different objects by pigeons. In their task, pigeons had to discriminate which of four different objects had been presented. In the critical test, a familiar brick wall–like occluder was placed in different arrangements relative to, and adjacent to, the presented object. When the occluder was placed in front of the objects, the pigeons’ recognition correspondingly decreased. If they had completed the objects, accuracy should not have decreased, but given the previous results, this reduction is not so surprising. They also included a novel control, however, in which the “occluder” was placed behind the object. Even though the objects were still fully visible, this condition also reduced the pigeons’ ability to recognize the objects. Further research showed that training with these conditions could reduce, but not eliminate, this “behind” interference effect (Lazareva, Wasserman, & Biederman, 2007).

We have observed similar results when we placed an occluder either in front of or behind a discriminative object (Koban & Cook, 2009; Qadri, Asen, et al., 2014). In both of these tasks the pigeons had to discriminate among different kinds of moving stimuli: in the first, different rotating 3D shapes, and in the second, digital animal models performing different articulated actions. In both cases, we found interference effects similar to those of DiPietro, Wasserman, and Young (2002). When an occluder was simply placed behind the critical (and, in our case, moving) information, the pigeons showed a reduced capacity to perform the learned discrimination. The origins and conditions of this “behind” interference effect have yet to be determined. It appears that some type of masking or interference occurs when new edges or surfaces are added to previously learned depictions of objects. Other disparities between humans and pigeons have also been noted when pigeons have been asked to make explicit figure–ground judgments about complex images containing overlapping elements (Lazareva & Wasserman, 2012). Together these interference results, like the various completion studies and the studies on feature use, indicate that how pigeons process lines and edges at the intersections and boundaries of multiple visual elements is not well understood.

Not all of the results of investigations into completion are negative, however. Nagasaka and his colleagues have conducted three studies that they suggest indicate that pigeons can perceptually complete occluded and fragmented objects. In the first of these reports, Nagasaka, Hori, and Osada (2005) trained pigeons to discriminate the depth ordering of three bars arranged in the form of an “H.” On any trial, one of the two vertical bars was in front of the horizontal bar and one behind, and both were placed within the horizontal extent of the horizontal bar. The horizontal bar had a constant, intermediate brightness, while the two vertical bars varied between black and light gray. The depth ordering of the three bars could be changed by independently placing each vertical bar either in front of or behind the horizontal one. Four pigeons learned to identify either the nearer (unoccluded) or the farther (occluded) vertical bar (two birds each) by pecking at its location in the display.

The pigeons were then transferred to configurations in which the brightness of the regions where the vertical and horizontal bars overlapped was varied to simulate transparency. To the human eye, the depth ordering of the bars could still be perceived, with the upper and lower portions of each vertical bar appearing continuous. The pigeons’ responses to these stimuli accorded with the transparency-conveyed depth ordering, suggesting that they were completing the bars. This highly interesting result rests critically on the untested assumption that pigeons perceived transparency in this context. Several additional tests would have helped to examine this claim further. For example, could the pigeons have continued to perform the task if the upper and lower portions of the vertical bars were removed? A completion account suggests this would have been unlikely, if not impossible. Another test would have been to misalign or rotate the vertical bar segments to see how disrupting their continuity affected accuracy. Finally, using varied gradients or textures to modulate the availability of simple edge relationships would have been an effective way to test how local edge cues contributed to the overall discrimination. While we find this result intriguing for its profound implications for the perception of both completion and transparency in birds, we would like better evidence that the pigeons were not attending to some other set of cues to solve this quite clever manipulation. This stimulating and important finding deserves wider investigation.

Nagasaka, Lazareva, and Wasserman (2007) reported another potentially positive result using a three-item choice task. Here the pigeons were trained in multiple stages to peck at a target shape that would be occluded by a darker adjacent shape. The target was presented alongside distractors that were either complete or incomplete versions of the target shape. The authors found that errors to the different distractors were initially evenly divided, but with experience, errors were gradually made more often to the complete distractor than to the incomplete one. Furthermore, the presence of a monocular perspective cue to depth had no impact on this error rate. The authors suggest that this differential error rate stems from the pigeons perceptually completing the occluded target shape, but other explanations can account for these results. The most obvious alternative is that the pigeons learned a form of relative size discrimination: the target shape and occluder together always formed the largest area, to which the complete distractor would be most similar. The authors spent considerable effort attempting to rule out this alternative through post-hoc examinations of the data. While the overall effect is in the right direction, additional controls are clearly needed before this study’s outcomes are persuasive.

Finally, Nagasaka and Wasserman (2008) used object motion in a highly original design to try to capture evidence of perceptual completion in pigeons. In their first experiment, they trained four pigeons to choose one spatial choice alternative for a square moving in a circular trajectory and the other alternative when a set of four separated line segments moved in a synchronous pattern that looks very different. The segment pattern, however, has the appearance of what a complete square would look like if its vertices were hidden behind a set of circles. After learning, the pigeons were tested with gray, circular occluders added to the displays, “covering” the vertices that had been deleted in the training condition. Given this design, if the pigeons saw the occluders as superimposed on a complete moving square, they should have chosen that response alternative at high rates. However, in the first experiment, all four pigeons responded strongly as if they were seeing line segments instead. Three additional experiments involved further training and various improvements to the testing situation (increasing the occluder contrast, familiarizing the occluders, using a circular target form, filling the target form). In each experiment, one or two pigeons chose the “complete” alternative at levels consistent with seeing the stimuli in that manner. However, a different combination of pigeons exhibited this “completion” result in each experiment, such that no single pigeon showed it consistently across all the experiments. Thus, a “completion” report by one bird in one experiment would disappear in the next, despite changes in the displays designed to enhance the completion effect. This is a puzzling outcome. Had the results been consistent across birds and experiments, they would have provided an excellent demonstration of perceptual completion in pigeons.

In total, these ingenious designs purporting to show completion in pigeons deserve high marks for originality, and they have produced the best evidence yet that pigeons might perceptually complete figures. That said, a number of reservations and untested conditions currently prevent this evidence from establishing that pigeons can perceptually complete or connect the parts of occluded objects.

Taken together, the considerable number of experiments in this section all seem to point to one consistent and undeniable fact: it has simply not been easy to obtain evidence of perceptual completion in pigeons. A phenomenon easily produced in humans has not been readily reproduced in pigeons, despite numerous attempts using different and sometimes complicated approaches. Most experimental tests have produced negative results, while the few that seem more promising carry reservations suggesting further research is needed. In most cases, the pigeons either found alternative cues to the discrimination or accurately reported exactly what was presented to them. Although several of these studies have addressed the issue of “naturalness” in various ways, the general concern over whether the pigeons perceive the displays globally remains. Much as with dot-based stimuli, these results on their surface suggest that pigeons may lack the processes needed to connect separated elements into larger configurations (but see Kirkpatrick, Wilkinson, & Johnston, 2007). Despite a natural world that seems to require the ability to complete occluded and disconnected edges and surfaces, this visual capacity remains difficult to elicit in the laboratory with pigeons.

Geometric Visual Illusions

Visual illusions are stable, non-veridical perceptions of the world produced by the visual system. Besides being fun to experience, these reliable misperceptions provide psychological insight into the nervous system’s contribution to the act of perception. The large number of illusions identified in human perception has contributed substantially to our understanding of its mechanisms. Presumably, such illusory perceptions are by-products of processes that evolved to allow observers to process the natural world quickly and effectively, at the cost of fidelity in the specific, often artificial, circumstances that illusions present.

Because of these considerations, the examination of visual illusions in animals has long been of interest (Fujita, Nakamura, Sakai, Watanabe, & Ushitani, 2012; Malott, Malott, & Pokrzywinski, 1967; Révész, 1924; Warden & Baar, 1929). If animals experience visual illusions as we do, this would be good evidence that the underlying processes and representations are functionally the same, since illusions directly capture the influence and action of neural processes. If they do not, it would suggest that different neural organizations are involved in processing the elements of these displays. Furthermore, these different mechanisms would represent alternative solutions to the same “visual problem” that the processes giving rise to illusions presumably address in the human visual system.

Likely because they are easy to create, geometric visual illusions have been the most commonly examined type of illusion in animals. In pigeons, four have attracted the most attention: the Ponzo, Müller-Lyer, Ebbinghaus-Titchener, and Zöllner illusions. Examples of each can be seen in Figure 5. In each case, a basic psychophysical discrimination, such as a line length or circle size judgment, is tested with inducing contexts that shift or bias responding in humans, even though the judgment does not require using or considering the context. In humans, these illusions nonetheless highlight the automatic context dependence of such judgments. The story for pigeons is more complicated.

Figure 5. Examples of stimuli from experiments testing geometric illusions. Panel A illustrates the Ponzo illusion. Panel B depicts the Müller-Lyer illusion on top and the reverse Müller-Lyer illusion on the bottom. Panel C depicts the Ebbinghaus-Titchener illusion. Panel D shows an example of the Zöllner illusion.

Several well-designed studies have suggested that pigeons may share a common perception of the Ponzo illusion. In this illusion, an inducing context of two converging lines alters the length judgment of a centrally positioned line (see Figure 5A). Fujita, Blough, and Blough (1991) found evidence that pigeons experience this illusion in a manner similar to humans. Pigeons were trained to discriminate the length of a centrally placed horizontal line, choosing one alternative for the three shorter lines and the other alternative for the three longer lines (i.e., trained to categorize lines as “short” and “long”). To familiarize the pigeons with the surrounding context, this training was conducted with parallel context lines and with the target line placed at three different positions within this context (high, middle, and low). After learning the discrimination, the pigeons were tested with illusion-inducing contexts produced by making the irrelevant lines non-parallel and converging toward the top. This inducing context produced an asymmetric biasing effect: a very large “long” bias for lines placed near the converging top of the context and a smaller but consistent “short” bias for lines placed near the diverging bottom. The authors also tested varying degrees of context-generated depth perspective, but this did not affect the pigeons’ responding. Thus, it appeared not to matter whether the inducing context portrayed “depth”; simply appearing convergent was sufficient. Follow-up experiments with additional pigeons found that this biasing effect held generally over a variety of line lengths and converging angles of the inducing context (Fujita, Blough, & Blough, 1993). This later research also found that the gap between the inducing context and the line contributed importantly to the pigeons’ discrimination. Together, these systematic biases are consistent with the pigeons possibly experiencing a Ponzo-like illusion.

The Müller-Lyer illusion is another classic illusion investigated in pigeons. In this illusion, an inducing context of inward- and outward-facing “arrows” at the endpoints of a line segment alters the length judgment of the line (see top of Figure 5B). Results from different experiments have been mixed for this display. Malott et al. (1967) and Malott and Malott (1970) trained pigeons to respond to a horizontal bar with vertical end lines. When the pigeons were subsequently tested for generalization with inward or outward inducing arrows on lines of varying length, response rates to the outward arrows changed in a manner consistent with perceiving the illusion. The inward arrows, however, appeared not to affect responding.