Imitating Sounds: A Cognitive Approach

Imitating Sounds: A Cognitive Approach

to Understanding Vocal Imitation

Eduardo Mercado III

University at Buffalo, The State University of New York

James T. Mantell

St. Mary’s College of Maryland

Peter Q. Pfordresher

University at Buffalo, The State University of New York

Reading Options:

Continue reading below, or:

Read/Download PDF | Add to Endnote

Abstract

Vocal imitation is often described as a specialized form of learning that facilitates social communication and that involves less cognitively sophisticated mechanisms than more “perceptually opaque” types of imitation. Here, we present an alternative perspective. Considering current evidence from adult mammals, we note that vocal imitation often does not lead to learning and can involve a wide range of cognitive processes. We further suggest that sound imitation capacities may have evolved in certain mammals, such as cetaceans and humans, to enhance both the perception of ongoing actions and the prediction of future events, rather than to facilitate mate attraction or the formation of social bonds. The ability of adults to voluntarily imitate sounds is better described as a cognitive skill than as a communicative learning mechanism. Sound imitation abilities are gradually acquired through practice and require the coordination of multiple perceptual-motor and cognitive mechanisms for representing and generating sounds. Understanding these mechanisms is critical to explaining why relatively few mammals are capable of flexibly imitating sounds, and why individuals vary in their ability to imitate sounds.

Keywords: mimicry; copying; social learning; singing; emulation; imitatible; convergence; imitativeness

Author Note: Preparation of this paper was made possible by NSF grant #SMA-1041755 to the Temporal Dynamics of Learning Center, an NSF Science of Learning Center and NSF grant #BCS-1256864. We thank Sean Green, Emma Greenspon, and Benjamin Chin for comments on an earlier version of this paper. Correspondence concerning this article should be addressed to Eduardo Mercado III, Department of Psychology, University at Buffalo, SUNY, Buffalo, NY, 14260. Email: emiii@buffalo.edu.

In his seminal text, Habitat and Instinct, Lloyd Morgan (1896, p. 166) describes two general kinds of imitation: instinctive imitation and intelligent or voluntary imitation. The examples he provides of intelligent imitation mostly involve reproducing sounds—a child copies words used by his companions, a mockingbird imitates the songs of 32 other bird species, a jay imitates the neighing of a horse, and so on. In fact, most of the examples of “imitation proper” that Morgan provides consist of birds reproducing the sounds of other species. Similarly, Romanes (1884) focuses almost exclusively on reports of birds imitating songs, music, and speech in his discussion of imitation. These classic portrayals of vocal reproductions as providing the best and clearest examples of imitation stand in stark contrast to current psychological discussions of imitation, which often classify examples such as those given by Romanes and Morgan as non-imitative performances that merely resemble actual imitation (Byrne, 2002; Heyes, 1996). When did phenomena that were once considered archetypal examples of voluntary imitation transform into a footnote of modern cognitive theories? Was some discovery made that fundamentally changed our scientific understanding of the processes underlying vocal imitation? Have psychologists or biologists succeeded in explaining what vocal imitation is and how it works to the point where little can be gained from further study? Or, have theoretical assumptions led scientists to underestimate the cognitive mechanisms required for an individual to be able to flexibly imitate sounds?

In the present article, we attempt to identify what exactly vocal imitation entails, and to assess whether current explanatory frameworks adequately account for this ability, including its apparent rarity among mammals. Past theoretical and empirical considerations of vocal imitation have often focused on the ability of birds to learn songs or reproduce speech (Kelley & Healy, 2010; Margoliash, 2002; Nottebohm & Liu, 2010; Pepperberg, 2010; Tchernichovski, Mitra, Lints, & Nottebohm, 2001), especially during development, or on the sophisticated ways in which birds interactively copy songs (Akcay, Tom, Campbell, & Beecher, 2013; Molles & Vehrencamp, 1999; J. J. Price & Yuan, 2011; Searcy, DuBois, Rivera-Caceres, & Nowicki, 2013). In contrast, vocal imitation by mammals other than humans has received little attention. When mammalian vocal imitation has been discussed, it typically has been described as a vocal learning mechanism, because of its presumed involvement in vocal repertoire development (Janik & Slater, 1997, 2000; Tyack, 2008). Although imitation clearly has an important role in learning, imitation by definition involves performance (via reproduction) and thus can exhibit varying degrees of success. Moreover, the effectiveness of imitation is itself an index of learning (e.g., we say a tennis player has reached expertise in serving when he or she can demonstrate the coordination exhibited by a professional). Thus, we argue here that vocal imitation by adult mammals is better viewed as performance of a learned skill, and that a closer examination of those species and individuals that have acquired this skill to a high degree can clarify the mechanisms that underlie vocal imitation abilities. Currently, the only mammals that have clearly demonstrated the ability to voluntarily imitate sounds are primates (particularly humans) and cetaceans (whales and dolphins). The main goals of this article are to reassess the available evidence on vocal imitation in these two groups and to provide new perspectives on how to better integrate future investigations of vocal imitation phenomena.

The paper is divided into six sections. In the first two sections, we consider alternate conceptualizations of vocal imitation (both historical and modern) that have different theoretical implications for the origin and role of vocal imitation. These alternate frameworks function as hypotheses against which we compare the literature summarized in subsequent sections. In section three, we discuss possible constraints on vocal imitation with respect to sounds that are imitatible by human and non-human primates, and also consider the degree to which the vocal motor system, as opposed to other motor systems, is attuned to the imitation of sound. Section four evaluates past reports that cetaceans, a group of mammals famous for their vocal flexibility, are capable of imitating sounds. Consideration of evidence from both primates and cetaceans leads to the proposal that sound imitation may serve a critical role for spatial perception and the coordination of actions (section five), in contrast to other accounts, which focus on its role in the development of social communication. Finally, in the sixth section we discuss possible mechanisms for vocal imitation, highlighting an existing computational model of speech learning and imitation that may provide an integrative theoretical framework for conceptualizing the representational mechanisms underlying the sound imitation abilities of mammals.

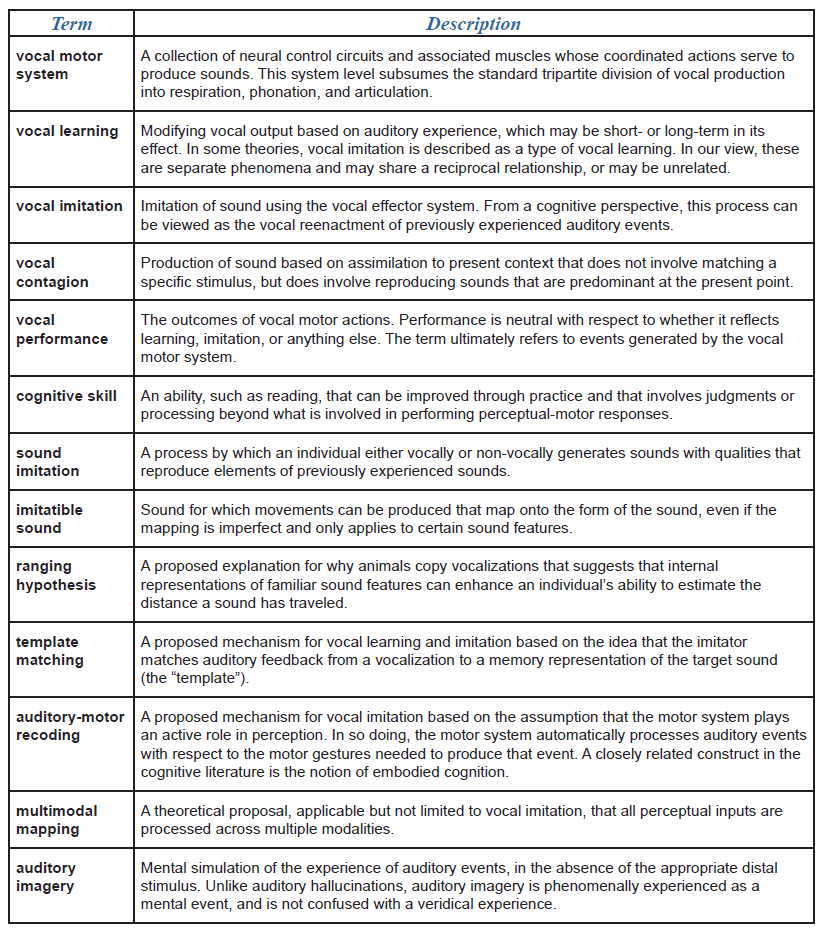

I. What Is Vocal Imitation?

Over the past century, researchers have used varying terminology to describe animals’ reproduction of sounds. In some cases, the same term has been used to describe different classes of phenomena. In others, different terms have been applied to the same phenomenon. For instance, the terms vocal mimicry and vocal copying have often been used as either synonyms for vocal imitation, or as a way to distinguish particular kinds of imitative or non-imitative vocal processes (Baylis, 1982; Morgan, 1896; Witchell, 1896). Marler (1976a) distinguished cases in which vocal production is modified as a result of auditory experience (vocal learning) from cases in which an individual produces sounds of a novel morphology by imitating previously experienced sounds (vocal imitation). Similarly, in their description of vocal developmental processes in bottlenose dolphins (Tursiops truncatus), McCowan and Reiss (1995) distinguished vocal learning, which they suggest occurs mainly during development, from vocal mimicry, which they describe as an imitative process that contributes to vocal learning (see Wickler, 2013, for a discussion of how the term mimicry might best be applied to sound production). To avoid potential confusion, we provide a glossary detailing our use of terminology (Table 1).

There is general consensus that vocal imitation must involve some attempt (intentional or incidental) to match an auditory event with the vocal motor system. The nature of this ability, however, has been a point of debate. Early on, Thorndike (1911) rejected the proposals of Morgan (1896) and Romanes (1884) that vocal reproductions by birds were examples of imitation. Thorndike seemed to believe that vocal imitation required less sophisticated mental capacities than other kinds of imitation. He claimed that the ability to copy sounds was a specialized capacity possessed by a few select bird species, and that, “we cannot . . . connect these phenomena with anything found in mammals or use them to advantage in a discussion of animal imitation” (1911, p. 77). Many researchers have subsequently endorsed Thorndike’s characterization of vocal imitation, either explicitly or implicitly (Byrne & Russon, 1998; Galef, 1988; Heyes, 1994; Shettleworth, 1998). For example, Tyack and Clark (2000) described the vocal imitation abilities of cetaceans as “the most unusual specialization in cetaceans.” In contrast, several experimental psychologists have argued that vocal imitation abilities are not specialized at all, but simply reflect basic mechanisms of conditioning (reviewed by Baer & Deguchi, 1985; Kymissis & Poulson, 1990). Some researchers describe vocal imitation as a specialized social learning mechanism that enables individuals to rapidly acquire new communicative signals (Bolhuis, Okanoya, & Scharff, 2010; Janik & Slater, 2000; Kelley & Healy, 2011; Sewall, 2012; Tyack, 2008), whereas others classify all instances of vocal reproduction as non-imitative phenomena (Byrne, 2002; Galef, 1988; Heyes, 1996; Zentall, 2006). In the following, we critically consider each of these approaches to explaining what vocal imitation is, noting their strengths and limitations.

Is Vocal Imitation an Outcome of Instrumental Conditioning?

Instrumental (operant) conditioning is a learning process in which the consequences of an action determine its future likelihood of occurring (Domjan, 2000; Immelmann & Beer, 1989). Miller and Dollard (1941) suggested that apparently copied actions (including vocal acts) might in some cases only match by coincidence, having been reinforced independently of any similarities in performance. In such situations, some apparent cases of vocal “imitation” can be viewed as an instance of instrumental conditioning, referred to as matched-dependent behavior. For example, one could train a dog to produce whining sounds whenever it hears the cries of a baby. The dog’s whines might be acoustically similar in certain respects to the baby’s cries, but these similarities are coincidental; the dog might just as easily have been trained to bark or to open a door whenever it heard the cries. Though the trained behavior may match the discriminative stimulus, it is not the degree of match per se that leads to reinforcement. Miller and Dollard distinguished matched-dependent behavior from copying, in which the presence of reinforcement is contingent on the successfulness of matching. Learning to sing a melody by matching the sounds produced by an instructor would be an example of this kind of vocal copying. The teacher uses feedback to reinforce correct matches and to punish mismatches. The main difference between matched-dependent behavior and copying in Miller and Dollard’s framework is that a copier directly compares his acts (or their outcomes) with those of a target to evaluate their similarity, such that the level of detected similarity becomes a cue controlling behavior. A commonality across matched-dependent behavior and copying is that changes in vocal behavior are described as reflecting reinforcement histories alone, and thus do not require any specialized learning mechanisms.

Miller and Dollard’s explanation for acts of vocal imitation (construed as copying or matched-dependent behavior) continues to be endorsed by some psychologists (Heyes, 1994, 1996). For instance, Heyes (1994, p. 224) suggested that, “copying is virtually synonymous with vocal imitation.” This interpretation of vocal imitation rests on four major assumptions: (1) a vocalizing individual initially produces sounds at random, after which a subset are rewarded; (2) all a vocalizing individual needs to be able to do to reproduce a sound is recognize similarities between produced sounds and previously perceived sounds; (3) mismatches between an internally stored model of a previously experienced sound and percepts of produced sounds drive instrumental conditioning see also the discussion of auditory template matching in section six); and (4) such mismatches correspond to errors in production. Like those of many previous researchers, the examples of vocal copying provided by Heyes focus mainly on song learning and speech reproduction by birds.

Mowrer (1952, 1960) similarly proposed that vocal imitation, even in humans, was a consequence of instrumental conditioning rather than a specialized ability. He suggested that for such conditioning to occur, a sound produced by a model initially had to be established as a secondary reinforcer by being associated with pleasant outcomes. Later, a “babbling” individual (e.g., a parrot or human infant) might occasionally make a similar sound. Assuming that the vocalizing individual generalized from its past experiences with the secondary reinforcer, hearing the self-produced sound would reinforce the immediately preceding vocal act. The more similar to the original sound the babbled sound was, the more reinforcing it should be, leading to a kind of autoshaping or successive approximation in which the vocalizing individual is differentially self-rewarded based on how closely it produces copies of the original sound. By this account, vocal imitation is simply an automatic, trial-and-error process that depends on initial rewards from another organism to establish certain sounds as secondary reinforcers. Mowrer thus describes vocal imitation phenomena as the result of latent learning about associations between sounds and rewards. He notes that sounds can only maintain their efficacy as secondary reinforcers if they are occasionally supplemented by external social reinforcements. Thus, as in Miller and Dollard’s (1941) explanation of copying, Mowrer claimed that feedback from a teacher is critical for vocal imitation to occur.

Baer and colleagues (1967) later showed that explicitly trained vocal imitation in children immediately generalized to novel sounds. They suggested that topographical similarity between a performed act and a perceived act could become a conditioned reinforcer, which could lead to generalized imitation across different stimuli (see also Garcia, Baer, & Firestone, 1971; Gewirtz & Stingle, 1968; Zentall & Akins, 2001). Their proposal parallels Miller and Dollard’s (1941) claim that recognition of similarity is a critical component of vocal copying, and again requires reinforcement of imitative vocal acts by a teacher. In Baer and colleagues’ generalized imitation framework, a vocalization is imitative if it occurs after a vocal act demonstrated by another individual, and if the form of the model’s vocalization determines the form of the copier’s vocalization. The proposal that generalized vocal imitation can be viewed as a consequence of operant conditioning has received some support from recent studies of the role of vocal imitation in speech development by children (Poulson, Kymissis, Reeve, Andreators, & Reeve, 1991; Poulson, Kyparissos, Andreatos, Kymissis, & Parnes, 2002).

Collectively, past theoretical analyses of vocal imitation by experimental psychologists have often focused on establishing that this phenomenon can be viewed as an outcome of instrumental conditioning with few if any unique characteristics. These accounts generally do not explain why vocal imitation abilities are absent in most mammals. Given that mammals are quite capable of being instrumentally conditioned, some researchers have suggested that the rarity of vocal imitation abilities in mammals reflects limitations in vocal control (Arriaga & Jarvis, 2013; Deacon, 1997; Fitch, 2010; Mowrer, 1960). However, this explanation remains speculative (Lieberman, 2012), and others have suggested that what is missing are mechanisms that make it possible for an organism to adaptively adjust existing vocal control mechanisms. For example, Moore (2004) hypothesized that an organism must possess specialized imitative learning mechanisms beyond those necessary for instrumental conditioning before vocal imitation becomes possible (see also Subiaul, 2010). Thus, Thorndike’s (1911, p. 77) view that vocal imitation abilities are “a specialization removed from the general course of mental development,” has resurged in recent years and is currently the dominant view among biologists studying vocal imitation.

Is Vocal Imitation a Specialized Type of Vocal Learning?

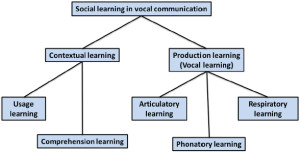

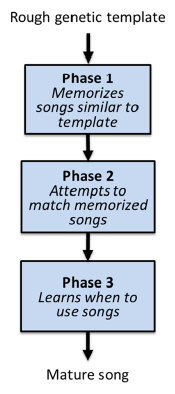

Figure 1. Taxonomy proposed by Janik and Slater (2000) in which vocal learning is distinguished from contextual learning and subtypes of vocal

learning are associated with different effectors. In this framework, vocal imitation of amplitude and duration features involves respiratory learning, imitation of frequency contours or pitch involves phonatory learning, and imitation of timbre involves articulatory learning.

As noted above, Marler (1976a) defined vocal learning as a process whereby vocal production is modified as a result of auditory experience. More recently, this term has been used to refer to any type of learning that involves vocal systems (Arriaga & Jarvis, 2013). Several reviews of vocal learning by mammals suggest that it represents a specialized form (or actually several different forms) of motor learning (Egnor & Hauser, 2004; Janik & Slater, 1997, 2000; Jarvis, 2013; Sewall, 2012; Tyack, 2008). Within modern vocal learning taxonomies, vocal imitation is often described as a particular type of vocal learning called vocal production learning (Fitch, 2010; Tyack, 2008) or production learning (Byrne, 2002; Janik & Slater, 2000). Vocal production learning, defined as the ability to modify features of sounds based on auditory inputs, has been distinguished from contextual learning, which is said to consist of learning how to use or comprehend sounds (Figure 1). Janik and Slater (2000) divided vocal production learning into three “forms” depending on which articulators were thought to be involved. Tyack (2008) identified over a dozen forms based on how animals used the sounds (e.g., vocal production learning involving sounds used for echolocation). The three kinds of vocal production learning proposed by Janik and Slater correspond to acoustic features controlled by the vocalizing individual, including: (1) duration and amplitude; (2) pitch or frequency modulation; and (3) relative energy distribution or timbre. They hypothesize that modifying the duration and amplitude of a sound represents the simplest form of vocal learning (because these features depend mainly on respiratory control), and that modifying frequency components through control of vocal systems requires more advanced mechanisms (Janik & Slater, 1997, 2000). They also suggest that, because of its rarity, the ability to copy (i.e., imitate) novel sounds is the most advanced form of vocal learning.

A simple way of thinking about the distinction between contextual learning and production learning proposed by Janik and Slater (2000) is that contextual learning determines when animals produce the sounds they know how to make, whereas production learning determines what sounds they know how to make. For instance, situations in which animals respond to hearing certain sounds by producing similar sounds (e.g., dogs that bark when they hear barking or infants that cry when they hear crying) would not qualify as vocal imitation or vocal learning by these criteria (Andrew, 1962). These cases would meet the criteria for contextual learning, however, because sound usage is context dependent. Such phenomena are typically referred to as instances of vocal contagion (Piaget, 1962).

Byrne (2002), following the terminology proposed by Janik and Slater (2000), describes instances of vocal contagion as a kind of contextual learning1 in which heard sounds prime particular vocal acts, a process that he refers to as response facilitation. He describes vocal production learning as a potentially more interesting case because it includes the generation of new vocal acts and therefore requires more than just response facilitation. Nevertheless, he suggests that such vocal acts are not imitative, because in some cases only the outcomes of the actions are reproduced (see also Morgan, 1896). For instance, a mynah bird reproducing speech sounds cannot replicate the speech acts of a human, because the bird does not use the same vocal organs to produce sounds (however, see Beckers, Nelson, & Suthers, 2004; Patterson & Pepperberg, 1994).

Vocal imitation of novel sounds often has been touted as the clearest evidence of vocal production learning (Fitch, 2010; Janik, 2000; Tyack, 2008). The basic logic underlying past emphasis on the imitation of novel sounds is that if a vocalization is not novel, then one cannot be sure that imitation actually occurred. The origins of this criterion can be traced to Thorpe (1956, p. 135), who proposed that, “By true imitation is meant the copying of a novel or otherwise improbable act or utterance, or some act for which there is clearly no instinctive tendency.” Herman (1980) was one of the first to suggest that copying novel sounds requires more sophisticated cognitive mechanisms than modifying features of existing vocalizations (see also Baylis, 1982). He noted that many mammalian species can be trained to adjust their existing vocalizations into new forms or usage patterns (Adret, 1993; Johnson, 1912; Koda, Oyakawa, Kato, & Masataka, 2007; Molliver, 1963; Myers, Horel, & Pennypacker, 1965; Salzinger, 1993; Salzinger & Waller, 1962; Schusterman, 2008; Schusterman & Feinstein, 1965; Shapiro & Slater, 2004), whereas few species show any ability or inclination to copy novel sounds. Hearing individuals vocalize in ways that resemble the vocalizations of other species forcefully suggests that one has witnessed an imitative act. However, as originally noted by both James (1890) and Thorndike (1911), observations of an organism producing a novel action that resembles human actions, however precisely, does not provide strong evidence that the organism is imitating. Conversely, the fact that production of familiar vocalizations can potentially be attributed to mechanisms other than imitation does not provide strong evidence that those vocalizations are not truly imitative. Such ambiguities severely limit the usefulness of current taxonomical approaches for describing and understanding vocal imitation processes.

Limitations of the Vocal Learning Framework

Problems with defining vocal imitation. Past emphasis on specifying criteria for reliably identifying instances of vocal imitation have led researchers to focus almost exclusively on situations in which similarities between a produced sound and other environmental sounds seem unlikely to have occurred by chance (e.g., when the sound is novel and acoustically complex). However, the fact that human observers perceive an animal’s vocalizations as strikingly similar to a salient environmental sound (e.g., electronic sounds, speech, or melodies), either through subjective impressions or quantitative acoustic analyses, is no more evidence that vocal imitation has occurred than the fact that certain photos of the surface of Mars look like a face is evidence that aliens reconfigured the landscape into that shape. Videos showing examples of cats and dogs producing vocalizations that are aurally comparable to the phrase, “I love you,” are now commonplace, and yet few if any scientists would view these as evidence that these pets are imitating human speech. This is because many different mechanisms can lead to the production of atypical sounds. A novel vocalization might be a seldom-used part of an individual’s repertoire, the result of some combination of previously learned vocal acts, or an aberration resulting from atypical genetics, diseases, or neural deficits. If an elephant produces a sound that resembles that of trucks (Poole, Tyack, Stoeger-Horwath, & Watwood, 2005) or speech (Stoeger et al., 2012), it remains possible that these sounds are ones that a small number of elephants infrequently make, independently of whether they have ever heard trucks or speech. Alternatively, vocalizations may have been modified through differential reinforcement to more closely resemble those of environmental sounds (which would represent a case of contextual learning using the taxonomy shown in Figure 1). Consequently, the novelty criterion does not reliably differentiate vocal imitation, vocal learning, or contextual learning.

In contrast, human research benefits from experimenters’ ability to explicitly instruct human participants to intentionally imitate sound sequences (e.g., Mantell & Pfordresher, 2013). In these experiments, researchers assume that participants are following instructions and earnestly attempting to imitate sounds. This assumption implies that both accurate and inaccurate reproductions of any sound sequence (novel or familiar) are viewed as valid attempts at vocal imitation. A second branch of human vocal imitation research exploits the tendency for human speech patterns to align with (become more similar to) previously experienced speech stimuli (e.g., Goldinger, 1998). In these studies, experimenters instruct their subjects to perform a vocal task such as word naming without actually telling them to imitate sounds. Researchers assume that when an individual produces speech with features similar to those produced by a speaker s(he) has been recently exposed to, then this performance is indicative of spontaneous vocal imitation. Human vocal imitation research thus uses contextual factors as criteria for identifying imitative acts rather than idiosyncratic features of vocalizations.

A second criterion that has occasionally been used to exclude vocal performances from involving imitation is that an action (vocal or otherwise) can only be considered imitative if the specific movements of a model are replicated (e.g., Byrne, 2002). An oddity of this criterion is that if a dolphin were to copy sounds produced by a sea lion, then this would not count as imitation, because dolphins and sea lions have different sound producing organs. However, if a second dolphin copied the first dolphin’s “barking,” then this would count as imitation, because the imitator shares the same vocal organs as the model, and thus would likely replicate the sound producing movements of the model (see Wickler, 2013, for a more extensive critique of such distinctions). The logical consequence of this exclusionary criterion is that cross-species vocal imitation is impossible, because “imitation” requires identical physiological production constraints. However, defining imitation in this way does little to clarify what humans and other animals are doing when they seem to be copying sounds they have heard. Furthermore, it creates a false dichotomy between vocal imitation and other more visible forms of sound imitation (e.g., imitating a percussive rhythm).

Problems with equating learning and performance. A basic assumption underlying the claim that imitation of novel sounds provides the clearest evidence of vocal production learning is that, because the organism is producing an “otherwise improbable” sound that it has not been observed to produce before, it must have gained the ability to do so through its auditory experience (i.e., it must have learned how to produce the sound by hearing it). It is clear, however, that the individual imitating the sound had the vocal control mechanisms necessary for producing the novel sound prior to ever hearing that sound. Hearing the novel sound merely set the occasion for the individual to express an already present capacity. By analogy, a person who has never seen a motorcycle before and sits on one for the first time does not spontaneously acquire the motor control needed to sit on a motorcycle simply by seeing someone else sit on one. Instead, the person generalizes existing sitting skills to a novel object. Similarly, an organism that copies features of a novel sound it has heard is applying existing vocal production skills to a novel auditory object. For example, upon hearing a tugboat horn, a child may successfully reproduce the long, low, shifting, spectrotemporal pattern on her first try, at least in relative pitch terms. There is no reason to think that experience with an unfamiliar percept somehow endows an observer with previously unavailable motor control abilities (Galef, 2013). Consequently, reproduction of novel sounds does not provide clear evidence of vocal production learning, and such performances actually might be better viewed as evidence of contextual learning, because it is the context that determines when the individual reproduces a novel sound.

It is important to note that psychologists’ use of the term learning differs from how this term is used colloquially and by biologists in that psychologists view learning as a long-lasting change in the mechanisms of behavior that results from past experiences with particular stimuli and responses (Domjan, 2000). In contrast, biologists’ definition of learning as “behavioral changes effected by experience” (Immelmann & Beer, 1989), makes no distinction between short- and long-term changes, emphasizes changes in actions rather than changes in mechanisms, and makes no attempt to specify why an action changed. Psychologists do not consider changes in behavior as strong evidence of learning, because many experiences such as fatigue, hunger, pain, injury, motivation, and drunkenness can also produce changes in behavior. Furthermore, numerous experiments have shown that learning can occur without any overt changes in an organism’s behavior. For these reasons, experimental psychologists have drawn a distinction between learning and performance—performance refers to what organisms do. How an organism performs often reflects past learning, but there is not a one-to-one mapping between performance and learning. Because biologists do not make a similar distinction, some phenomena they might classify as vocal learning do not meet psychologists’ criteria for learning. In the following, we use the term learning in the psychological sense, but use the phrase “vocal learning” in the sense preferred by biologists (see Table 1).

From a psychological perspective, production of novel sounds provides no evidence that learning has occurred. In fact, the learning that enables an individual to reproduce a particular sound may occur long before the novel sound is actually produced. This is not to say that vocal imitation of familiar or novel sounds never plays a role in vocal learning. Certainly, copying of sounds can afford many opportunities for learning that would not otherwise be available, especially in young children. Nevertheless, it is important to recognize that not only does vocal learning occur in the absence of vocal imitation (reviewed by Schusterman, 2008), but vocal imitation can also occur without involving any new learning. These facts are clearly problematic for any taxonomy that defines vocal imitation as a kind of learning.

Synthesis

The two approaches to explaining vocal imitation described above—defining vocal imitation either as an outcome of instrumental conditioning or as a kind of learning (the vocal learning framework)—parallel more general frameworks for describing imitation. For instance, in evaluating strategies for defining imitation, Heyes (1996) identified three basic solutions: the essentialist solution, the positivist solution, and the realist solution. The essentialist solution is a definition-by-exclusion strategy in which researchers classify different imitation-like phenomena using specific criteria in an attempt to identify what is truly an imitative act. The vocal learning taxonomical framework is an example of this strategy. Limitations of this approach are that classifications are only as good as the demarcation criteria that are developed, and that defining vocal imitation by exclusionary criteria does not clarify what vocal imitation actually entails. The positivist solution involves selecting an operational definition for what will be called vocal imitation. The instrumental conditioning framework qualifies as this type of strategy because it focuses less on differentiating vocally imitative acts from other acts, and more on identifying the conditions that lead to vocal reproductions. Finally, there is the realist solution, which focuses on explaining behavior in terms of theories about mental processes that yield testable hypotheses. Cognitive accounts of vocal imitation by adult humans exemplify this approach.

II. Vocal Imitation Is a Cognitive Process

One reason that vocal imitation often has been described as a learning mechanism is that comparative studies have focused on its role in vocal development (Baer, Peterson, & Sherman, 1967; Marler, 1970; McCowan & Reiss, 1997; Mowrer, 1952; Nottebohm & Liu, 2010; Subiaul, Anderson, Brandt, & Elkins, 2012; Tyack & Sayigh, 1997). In particular, there have been extensive comparisons between song learning by birds and speech learning by humans (Bolhuis et al., 2010; Doupe & Kuhl, 1999; Jarvis, 2004, 2013; Lipkind et al., 2013; Marler, 1970)2. From this perspective, vocal imitation provides a way for naïve youngsters to acquire the communicative abilities of mature adults. In fact, some researchers have argued that copying of sounds outside the natural repertoire may be a functionless evolutionary artifact (Garamszegi, Eens, Pavlova, Aviles, & Moller, 2007; Lachlan & Slater, 1999). Although vocal imitation abilities can be an important component of vocal development, the most versatile vocal imitators are adult humans (Amin, Marziliano, & German, 2012; Majewski & Staroniewicz, 2011; Revis, De Looze, & Giovanni, 2013). Furthermore, humans invariably achieve expertise in vocal imitation abilities well after learning to produce speech sounds. The most capable human vocal imitators perform copying feats that few adults can replicate. One could even argue that highly developed communication skills are a prerequisite for the highest levels of proficiency in vocal imitation, because professional imitators (e.g., impersonators, actors, singers) often receive detailed verbal feedback from instructors and peers over several years.

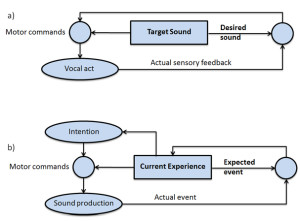

Viewing Vocal Imitation as a Component of Auditory Cognition

Cognitive psychologists’ conceptualization of vocal imitation by adult humans differs dramatically from that proposed by biologists and comparative psychologists for vocal imitation by non-humans. In particular, the emphasis in cognitive studies of vocal imitation is on how sounds and vocal acts are perceived, how links between percepts and actions contribute to performance, and how mental representations of events contribute to these processes. From this perspective, studies of vocal imitation in adults can be viewed as part of the field of auditory cognition, which focuses on understanding how mental representations and cognitive processes enable the understanding and use of sound.

In some respects, the cognitive approach to describing vocal imitation represents a return to Morgan’s (1896) portrayal of imitation. Recall that Morgan divided imitation into two types: instinctive and voluntary. As an example of instinctive vocal imitation, he described a scene in which a chick comes across a dead bee and gives an alarm call, which leads a second nearby chick to give a similar alarm call. Today, the latter part of this scenario would be described as a case of vocal contagion. Morgan contrasted this kind of reflexive vocal matching with voluntary imitation, which he also refers to as conscious, intentional, or intelligent imitation. He noted that voluntary imitation is not independent of instinctive imitation, but rather builds on it (see also Romanes, 1884). Notably, frameworks that describe vocal imitation as either instrumental conditioning or vocal learning make no distinction between reflexive and voluntary imitation. This distinction is common in cognitive studies of human vocal imitation, however, and has recently also been revisited in discussions of motor imitation. For example, Heyes (2011) distinguishes between two “radically different” types of imitation: a complex, intentional type that individuals can use to acquire novel behaviors (voluntary imitation), and a simple, involuntary variety that involves duplicating familiar actions (referred to as automatic imitation). Cognitive psychologists have also drawn a distinction between overt imitative acts, which involve the observable, physical reproduction of sound, and covert imitation, which involves the unobservable, mental, or subvocal reproduction of sounds or actions (Pickering & Garrod, 2006; Wilson & Knoblich, 2005). These distinctions have important implications for understanding what vocal imitation is, and for identifying the cognitive processes that make vocal imitation possible.

Automatic Imitation Suggests Vocal Imitation Frequently Goes Unnoticed

Automatic vocal imitation has been studied extensively by speech researchers and has been observed at multiple levels of processing, including syntactic, prosodic, and lexical alignment in conversation (Garrod & Pickering, 2009; Gregory & Webster, 1996; Levelt & Kelter, 1982; Neumann & Strack, 2000; Pickering & Branigan, 1999; Shockley, Richardson, & Dale, 2009). Automatic imitation is modulated by social factors such as gender (Namy, Nygaard, & Sauerteig, 2002), personal closeness (Pardo, Gibbons, Suppes, & Krauss, 2012), attitude toward the interlocutor (Abrego-Collier, Grove, & Sonderegger, 2011), conversational role (Pardo, Jay, & Krauss, 2010), model attractiveness (Babel, 2012), and even sexual orientation (Yu et al., 2011). Talkers apparently imitate both visual and auditory components of observed speech (Legerstee, 1990; R. Miller, Sanchez, & Rosenblum, 2010). Automatic vocal imitation processes may occur relatively continuously without any awareness by the vocalizing individual (or others) that they are occurring.

One common way of generating automatic vocal imitation in the laboratory is to have talkers listen to and then intentionally repeat just-heard speech (a task called shadowing). Listeners can voluntarily replicate speech with a delay as short as 150 ms (Porter & Lubker, 1980). Shadowing could be viewed as a case of rapid vocal imitation, but is more often described as word repetition. Rapid production of just-heard words supports the notion that perceived sounds may be automatically converted into articulatory commands (Skoyles, 1998). When a talker produces shadowed words in ways that are more similar to the just-heard words than to his or her spontaneous speech, then this is viewed as evidence that the talker has automatically imitated features of the just-heard words (Fowler, Brown, Sabadini, & Weihing, 2003; Honorof, Weihing, & Fowler, 2011; Kappes, Baumgaertner, Peschke, & Ziegler, 2009; Mitterer & Ernestus, 2008; Nielsen, 2011; Shockley, Sabadini, & Fowler, 2004). Goldinger (1998) found that immediately shadowed words were more likely to be judged by external evaluators as matching the just-heard sound than versions produced after a four second delay. He also found that when talkers shadowed uncommon words, their reproductions were more likely to be judged as matching the just-heard sound than when they shadowed common words. Similar effects have been observed in tasks in which talkers replicated unique word features that were encountered up to a week previously (Goldinger & Azuma, 2004; Nielsen, 2011). These findings suggest that the effects of automatic vocal imitation mechanisms on speech production may persist for long periods. Further evidence that experienced sounds may involuntarily affect vocal production comes from the earworm phenomenon, wherein a person involuntarily mentally or overtly rehearses a catchy tune that was previously encountered (Beaman & Williams, 2010; Halpern & Bartlett, 2011; Williamson et al., 2012).

People voluntarily shadow words when they are instructed to repeat them in laboratory studies, but there are cases in which individuals involuntarily shadow recently heard sounds in their environment, referred to as echolalia. Echolalia is commonly seen in people with autism and is also associated with several other disorders (Fay, 1969; Schuler, 1979; van Santen, Sproat, & Hill, 2013). It can involve either immediate or delayed reproduction of relatively complex sequences of speech sounds (Prizant & Rydell, 1984) or non-vocal sounds (Fay & Coleman, 1977; Filatova, Burdin, & Hoyt, 2010), and is often viewed as a contributing factor to dysfunctional language learning (Eigsti, de Marchena, Schuh, & Kelley, 2011). To date, detailed acoustic comparisons between heard speech and echolalic speech have not been performed, so the fidelity with which repeated sounds are copied is unclear.

Collectively, past studies of automatic vocal imitation demonstrate that humans sometimes reproduce features of previously experienced sounds without intending to do so and without being aware that they are copying heard features. Because automatic vocal imitation is often not apparent to the vocalizing individual and can occur after a significant delay, it may be more prevalent than is currently recognized. How automatic imitation relates to voluntary vocal imitation is a key question that researchers have grappled with for over a century.

Covert Imitation Suggests That Vocal Imitation May Enhance Perceptual Processing

Virtually all past discussions of vocal imitation assume that it is a process that primarily serves to enable an individual to produce certain sounds by reference to sounds previously heard. A recent alternative perspective is that imitative abilities may instead (or additionally) facilitate the prediction of future events (Grush, 2004; Hurley, 2008; Wilson & Knoblich, 2005). This perspective assumes that individuals are better able to perceive the actions of conspecifics if they can construct mental simulations of ongoing acts (including vocal acts) that occur in parallel with the perception of those acts. These mental simulations would be available to the individual perceiving the acts, but would not be evident in the observer’s behavior.

Covert vocal imitation is described as an automatic process in which a sound is represented, at least in part, in terms of the motor acts necessary to re-create the sound. The suggestion is that vocal imitative processing is not a rare event (as suggested by frameworks that only consider production of novel sounds to be evidence of imitation), but is instead a routine component of auditory processing. Echolalia is often interpreted as evidence that auditory processing normally engages an imitative process that would naturally lead to overt imitative acts if not for being actively inhibited (Fay & Coleman, 1977; Grossi, Marcone, Cinquegrana, & Gallucci, 2012). From this perspective, what is rare is for an organism to produce overt actions that reveal these representational processes—overt vocal imitation then becomes analogous to “thinking out loud.” Wilson and Knoblich (2005) suggest that vocal imitation serves not to enable the acquisition of new sounds, but rather as a perceptual process that uses “implicit knowledge of one’s own body mechanics as a mental model to track another person’s actions in real time” (p. 463). The advantage of such processing is that a listener can potentially fill in missing or ambiguous information and infer the trajectory of likely actions in the near future. In section five, we consider in more detail how such mental simulations may specifically contribute to audiospatial perception by cetaceans.

Voluntary Imitation Suggests That Vocal Imitation Can Be Consciously Controlled

Piaget (1962) was one of the first psychologists to collect empirical evidence that automatic vocal imitation abilities in human infants may provide a foundation for the later development of voluntary vocal imitation abilities. He strongly argued that vocal imitation was not an evolutionarily specialized ability. In fact, Piaget starts his book on imitation by stating that, “Imitation does not depend on an instinctive or hereditary technique . . . . the child learns to imitate” (1962, p. 5). Piaget proposed six successive stages in the development of voluntary vocal imitation in children: (1) vocal contagion, (2) interactive copying of sounds, (3) systematic rehearsal of sounds in the repertoire, (4) exploratory copying of novel sounds, (5) increased flexibility at imitating novel events, and (6) deferred imitation. Studies of vocal development in parrots led Pepperberg (2005) to suggest that parrots progress through similar stages of imitative development. She described three levels of vocal imitation proficiency, starting with the involuntary copying of sounds, followed by intentional production of copied sounds, which in some cases develops into more sophisticated, creative sound production including the recombination of familiar segments into new sounds.

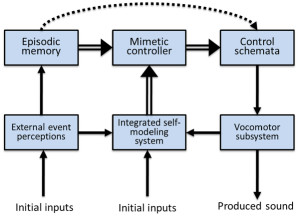

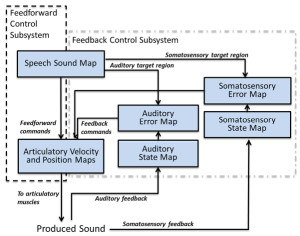

Relatively few researchers have theorized about the mechanisms or functions of vocal imitation in adult humans. Donald (1991) described vocal mimesis by adults as differing from vocal imitation in that it involves the invention of intentional representations as well as “the ability to produce conscious, self-initiated, representational acts that are intentional but not linguistic” (p. 168). He noted that vocal reproduction can serve communicative purposes, but may also function simply to represent an event to oneself. In his framework, vocal mimesis allows for the self-cued recall of previously perceived sounds, as well as the control of how those sounds might be transformed during reproductions; vocal acts that were initially involuntary (e.g., laughing) can be explicitly recalled and used intentionally, for instance in reenactments of past episodes or when acting out a scene. Donald proposed that the cognitive basis of vocal mimesis involves a combination of episodic memory abilities and “an extended conscious map of the body and its patterns of action, in an objective event space; and that event space must be superordinate to the representation of both the self and the external world” (p. 189). He describes the main outputs of this system as consisting of self-representations and episodic memories. Thus, his proposed mimetic system (Figure 2) builds on and encompasses an episodic memory system, which some describe as one of the most advanced cognitive systems in adult humans (Tulving, 2002).

Figure 2. Donald’s (1991) qualitative model of vocal mimesis in adult humans. The mimetic controller integrates episodic representations with outputs from self-representational systems to control how sounds are produced and to compare external events with self-produced actions.

The idea that episodic memory representations play a key role in vocal reproduction has also been discussed in relation to speech shadowing tasks (Goldinger, 1998). Goldinger proposed that each word exposure generates a memory trace that resonates with previously encoded traces of the same word. When there are fewer past traces in memory (i.e., the word is uncommon), resonance with the current word presentation is weak. As a result, the unique vocal characteristics of the just-heard word are more likely to be retained in the mental representation that drives the shadowing production plan. Unlike traditional descriptions of episodic memory, which assume that such memories are consciously accessed, Goldinger’s proposal implies that such memories may also automatically shape vocal production. Essentially, the idea is that memories of recent auditory episodes may continuously modulate how a listener vocalizes.

The capacity of adult humans to voluntarily copy sounds is best viewed as a cognitive skill that requires refined perceptual-motor control and planning abilities. Cognitive skills are abilities that an organism can improve through practice or observational learning that involve judgments or processing beyond what is involved in performing perceptual-motor responses (Anderson, 1982; Mercado, 2008; Rosenbaum, Carlson, & Gilmore, 2001). Relevant cognitive processes that may contribute to an adult’s vocal imitation skills include conscious maintenance and recall of past auditory or vocal episodes, selective attention to subcomponents of experienced and produced sounds, identification of specific goals of reproducing certain acoustic features, and awareness of possible benefits that can be attained through successful sound reproduction. From a cognitive perspective, an imitative vocal act is a memory-guided performance rather than a learning mechanism, and an individual’s ability to flexibly perform such acts will depend strongly on how that individual mentally represents both sounds and sound producing actions (Roitblat, 1982; Roitblat & von Fersen, 1992).

The most impressive vocal imitation abilities of adult humans involve voluntary, highly experience-dependent skills that are more reminiscent of soccer skills than of learning mechanisms. Soccer players can all walk, run, and judge the consequences of their motor acts, but these abilities are insufficient to make someone a professional soccer player. Similarly, the ability to make sounds, recognize similarities between sounds, and remember sounds are all necessary for vocal imitation, but these abilities do not make a person a professional impersonator. It would not make sense to say that a toddler uses his soccer abilities to learn how to walk, and it may similarly be questionable to say that a toddler uses his vocal imitation abilities to learn how to talk. What the toddler does in both cases is learn how to flexibly control his or her actions based on past experiences. S(he) gradually learns to voluntarily run and kick in strategically advantageous ways and also gradually learns to voluntarily produce sound features based on memories of past percepts and actions.

Synthesis

Past attempts to understand the nature of vocal imitation reflect the ways in which this phenomenon has been used as an explanatory construct. Psychologists have often noted the important role that vocal imitation may play in language learning, and consequently have emphasized how the availability and guidance of adult speakers may contribute to learning when infants copy their examples. Biologists have also stressed how vocal imitation can facilitate the vocal learning of communicative signals. Consequently, it is perhaps only natural that researchers have traditionally described vocal imitation as a learning mechanism. In contrast, it seems less likely that an adult human shadowing speech in a laboratory, or humming a tune while exiting a concert, is doing so to learn how to speak or to hum. Identifying when vocal imitation abilities are used provides hints about what those abilities may be for, but those hints may be misleading when only a subset of the relevant contexts are considered or when those abilities are difficult to observe. Without understanding the mechanisms that underlie sound imitation, and without any ability to monitor those mechanisms, it is simply not possible to definitively identify instances in which vocal imitation is occurring.

What then is vocal imitation? Clearly, different fields offer different ways of answering this question. Historically, animal learning researchers have described vocal imitation as the generalization of a conditioned response that is acquired through a supervised learning process. In this framework, acquisition of vocal imitation abilities (and consequent vocal communicative capacities) is subserved by general mechanisms of associative learning, rather than adaptively specialized vocal learning mechanisms. Animal behavior researchers, in contrast, have treated vocal imitation as a highly specialized adaptation that serves primarily to increase the flexibility with which animals can expand or customize their vocal repertoire. In this context, vocal imitation is the learning mechanism. Finally, cognitive psychologists construe vocal imitation as a consequence of multiple voluntary and involuntary representational processes. From the cognitive perspective, vocal imitation may help an organism learn, but this capacity can also be enlisted when no learning or vocalizing is occurring.

In the following, we use the term vocal imitation to refer to the vocal reenactment of previously experienced auditory events, essentially endorsing the framework developed by cognitive researchers studying vocal imitation in adult humans. Moreover, we claim that vocal imitation is a complex cognitive ability that involves coordinating action and perception. As such, vocal imitation can both be learned and in turn facilitate learning. The strength of this definition, and the cognitive approach more generally, is that it encompasses voluntary and automatic imitation, including covert imitation, and gives a clearer sense of the scope of cognitive processes that may contribute to vocal imitation abilities. A potential weakness of this definition is that it does not provide specific criteria for distinguishing imitative vocal acts from those that are non-imitative. As history has repeatedly shown, however, identifying such demarcation criteria is a formidable task, made all the more difficult by an incomplete understanding of the mechanisms underlying vocal imitation. Taxonomical distinctions may be useful for classifying different vocal phenomena, but it is less clear that they provide a viable framework for understanding what vocal imitation is or how it works. Instead, we focus on understanding how past experiences with various sounds enable some organisms to reproduce them. Because cognitive psychologists have studied vocal imitation most extensively in primates (primarily humans), we first consider the factors that determine when primates imitate sounds, as well as the features of sounds that primates are most likely to reproduce.

III. Sound Imitation by Human and Non-Human Primates

When considering the factors that constrain an individual’s ability to imitate sounds (or likelihood of doing so), a key question is: what makes a sound more or less imitatible? The answer to this question may vary across species and even within and across individuals of the same species. Wilson (2001b) defined imitatible stimuli as those for which an individual’s body can engage in an activity in which its configuration and movement can be mapped onto the configuration and movement of the stimulus, even if the mapping is not perfect and only applies to a limited set of properties of the stimulus. Most humans can easily imitate at least some speech sounds, but all other primates cannot. This has generally been interpreted as evidence that humans have unique capacities for imitating sounds. It remains possible, however, that sounds exist that at least some non-human primates might easily imitate, but that humans would find difficult or impossible to imitate. In the following, we suggest that a primate’s ability to imitate a particular sound depends, at least in part, on how the individual represents the sound and sound producing actions.

Imitating Sounds Non-Vocally

If vocal imitation is defined as the vocal reenactment of previously heard events, then sound imitation can be viewed as a generalization of this ability that includes both vocal and non-vocal reenactments. Past emphasis on understanding how vocal imitation enables individuals to learn to produce novel vocalizations has distracted attention away from instances in which organisms use non-vocal motor acts to reproduce sounds. According to Wilson’s (2001b) definition of imitatible stimuli, any sound-producing movements of an individual’s body that can be mapped onto features of heard sounds can potentially make that sound imitatible. Thus, it is important to consider all available sound producing body movements when evaluating the imitatibility of a sound for a particular species.

There have been several anecdotal reports of animals non-vocally reproducing environmental sounds such as percussive knocking (e.g., Witchell, 1896). This phenomenon has only recently been studied scientifically, however. Moore (1992) reported that a parrot (Psittacus erithacus) reproduced knocking sounds by drumming its head on objects after repeatedly observing a person hitting on a door. He later described this behavior as an instance of percussive mimicry, which he argued was a more sophisticated ability than vocal reproduction. Most reports of sound imitation by non-human primates involve non-vocal sound production (for rare exceptions, see Kojima, 2003; Masataka, 2003). Marshall, Wrangham, and Arcadi (1999) observed that chimpanzees (Pan troglodytes) exposed to a male that produced a Bronx cheer3 as part of his pant-hoot call subsequently began using this sound in their own calls. A captive orangutan (Pongo pygmaeus × Pongo abelii) independently learned to whistle and was able to match the duration and number of whistles produced by a human model (Lameira et al., 2013; Wich et al., 2009; see Figure 3a). Though performed with the mouth, whistling is a non-vocal motor act requiring fine control of lip positions and airflow. Most recently, infant chimpanzees have been shown to adopt particular non-vocal sound production techniques (kisses, lip smacks, Bronx cheers, teeth clacking) as attention-getting signals based on the techniques modeled by their mothers (Taglialatela, Reamer, Schapiro, & Hopkins, 2012). Chimpanzees also can be trained to produce such non-vocal sounds, suggesting that their ability to voluntarily generate novel sounds is more flexible than previously thought (Hopkins, Taglialatela, & Leavens, 2007; Russell, Hopkins, & Taglialatela, 2012).

The non-vocal sound imitation abilities of humans are often taken for granted in music education (Drake, 1993; Drake & Palmer, 2000; Palmer & Drake, 1997). For instance, a teacher may ask students to clap the rhythm of a song that they are learning to sing, or ask them to copy a demonstrated percussive pattern on various instruments. Conversely, most music students take ear-training classes that involve having to produce visually presented musical intervals vocally (called “sight-singing”), with the assumption that this ability will facilitate non-vocal reproduction of music. A musician that reproduces the melodic sequence produced by a singing bird or fellow musician when she plucks strings, presses piano keys, or uses air to make a reed vibrate, is also imitating the sounds non-vocally (Clarke, 1993; Clarke & Baker-Short, 1987). Many musicians learn to play songs “by ear,” which involves transforming heard sounds into the motor acts required to reproduce them (McPherson & Gabrielsson, 2002; Woody & Lehmann, 2010). Musicians and non-musicians can readily imitate the intonation patterns of sentences by moving a stylus on a tablet (d’Alessandro, Rilliard, & Le Beux, 2011). It is not clear anecdotally, either among human or non-human primates, that there is anything special about non-vocal reproduction of sounds relative to vocal imitation. The individuals appear to be reproducing sounds based on past experiences, regardless of whether the reenactment is produced through the voice or through some other means. In fact, the perceptual and cognitive demands appear to be comparable: the individual perceives a sound and then uses that sound as a guide for controlling motor acts that generate a similar event.

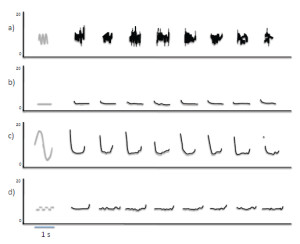

Figure 3. (a) Non-vocal sound imitation by an orangutan (adapted from Wich et al., 2009; Figure 2). Gray lines show spectrographic contours of whistles produced by a human, and black lines show the contours of subsequent whistles produced by the orangutan in which the number, timing, and duration of sounds are similar to features present in the target sequence. (b) Spontaneous vocal production by an infant chimpanzee (black lines show spectrographic contour and harmonics) with acoustic features similar to those of a preceding environmental sound (gray lines), indicative of vocal imitation (adapted from Kojima, 2003; Figure 9-2).

It is possible, however, that vocal and non-vocal sound imitation involve qualitatively different mechanisms. For instance, Moore (2004) argues that the parrot’s capacity for copying sounds percussively requires adaptations beyond those necessary for vocal imitation. In human studies, some have suggested that processing of different auditory events (e.g., melodies versus speech) may involve separate underlying mechanisms (Peretz & Coltheart, 2003; Zatorre & Baum, 2012; Zatorre, Belin, & Penhune, 2002), whereas others argue that there may be significant overlap (Mantell & Pfordresher, 2013; Patel, 2003; C. Price & Griffiths, 2005). Evidence supporting the view that vocal and non-vocal sound imitation can involve separate mechanisms was recently reported by Hutchins and Peretz (2012). In their study, participants who were classified as either accurate or poor-pitch singers matched pitch either vocally or manually by using a slider. The slider was used so that participants could continuously control pitch, as is the case for vocal pitch control, thus somewhat equating demands of pitch control across distinct effector systems. They found that pitch-matching errors in poor-pitch singers were voice specific. In other words, poor-pitch singers successfully matched pitch using the slider, but not using their voice. These results suggest that an individual’s ability to reproduce a pitch depends on the specific movements and associated feedback involved in matching the pitch.

For primates, sounds are imitatible when they are encoded in such a way that the stored representation of that sound enables the listener to voluntarily generate motor acts that produce phenomenological features present within the originally experienced sound. Note that by this criterion, any sound that a human hears is potentially imitatible, because the listener should be able to at least approximate the duration of the heard sound through some sound producing action. It is less clear which sounds would qualify as imitatible for other primates. Based on the currently available evidence, non-vocal sounds produced with the mouth seem to be relatively easy for chimpanzees and orangutans to reproduce, whereas vocal sounds are relatively easy for humans to imitate. Given that some sounds, such as those produced by conspecifics, will be easier to reproduce than other sounds, findings regarding which sounds (or features of sounds) are most imitatible can provide important clues about the factors that constrain imitation capacities within and across species.

Variations in the Imitatibility of Sounds

If sound imitation depends on adaptively specialized auditory-motor processing, then the sound features that should be easiest for an organism to imitate should be those present within functional vocalizations produced by conspecifics. Recent studies of humans provide some support for the hypothesis that vocal imitation is facilitated for natural vocalizations. For instance, matching of pitch is more accurate with a human voice timbre than a synthetic vocal timbre (Lévêque, Giovanni, & Schön, 2012; R. Moore, Estis, Gordon-Hickey, & Watts, 2008) or with a complex tone (Hutchins & Peretz, 2012; Watts & Hall, 2008). Adults also match pitch better when the vocal range of the target is closer to their range, as when female imitators match a female voice (H. E. Price, 2000). Similarly, children match pitch better when matching a child’s voice, and are better at matching pitch for a female than a male adult voice, given the greater similarity of female voice formants and pitch to a child’s voice (Green, 1990).

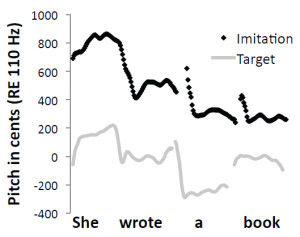

Figure 4. Pitch contours (shown as black dots) extracted from an adult human’s vocalizations when the individual was instructed to imitate a

target vocal sequence compared with spectral and temporal features of the target sequence (gray lines).

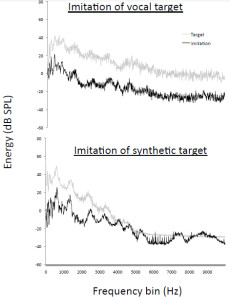

Mantell and Pfordresher (2013) recently explored differences in the vocal imitation of pitch within two cognitive domains: music (song) and language (speech). We summarize the results of this study here as a paradigmatic example of how vocal imitation can be influenced by stimulus structure, and of how the fidelity of imitations can be quantitatively assessed. According to the modular model of audition proposed by Peretz and Coltheart (2003), pitch processing occurs in domain-specific, information-encapsulated modules (Fodor, 1983) separate from speech processing. In a direct test of this framework, Mantell and Pfordresher compared the accuracy with which people intentionally imitated the pitch-time contents of spoken sentences and sung melodies. They created speech and song stimuli that matched in word content, pitch contour (the pattern of rising and falling pitch), pitch range, and syllable/note timing. The difference between the speech and song targets was that each note of the sung targets conformed to diatonic, musical tonal rules. Mantell and Pfordresher reasoned that if the pitch processing system underlying vocal imitation was truly modular, phonetic information should not influence imitative performance. Thus, the critical experimental factor was the presence or absence of phonetic information in the target sequences. They created wordless versions of all of the speech and song stimuli by synthesizing the pitch-time contents of each of the worded sequences. The wordless versions sounded like hummed versions of the sentences and songs, but they lacked all acoustic-phonetic identification cues. Imitation accuracy was gauged by directly comparing the target sequence with a temporally aligned imitative production (Figure 4) and by calculating two different quantitative measures of similarity (Figure 5). The first measure, mean absolute error, assessed the accuracy with which each imitative production matched the pitch content of the target. The second measure, pitch correlation, scrutinized the accuracy with which each imitative production tracked the relative (rising and falling) pitch-time contour of the target.

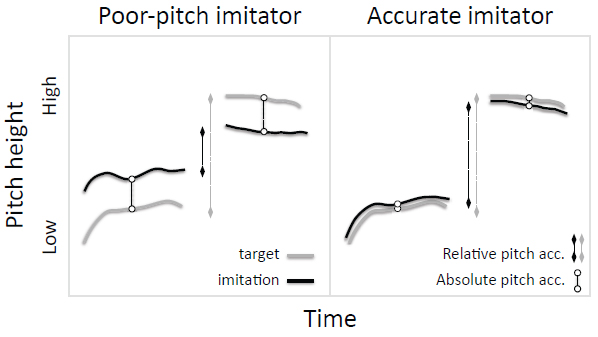

Figure 5. Poor-pitch imitators (left) produce vocalizations that do not match the target sounds in absolute or relative pitch, whereas typical adult humans (right) match both spectral features.

The critical finding of this study was that the presence of phonetic information in both the target and the imitative production reliably improved pitch accuracy. Thus, subjects imitated worded speech and song sequences more accurately than they imitated wordless speech and song sequences, despite the fact that the wordless versions were acoustically simpler (e.g., they lacked complex acoustic-phonetic spectral information). This finding is contrary to predictions afforded by a modular framework of music and speech processing, because if musical pitch processors are encapsulated to speech, then pitch processing should occur independently and unhindered (or not facilitated) by any parallel phonological processes. It also contradicts the proposal that imitation of spectrotemporal contours is inherently more difficult than imitation of other acoustic features (Janik & Slater, 2000). Mantell and Pfordresher further found that participants varied in their accuracy at imitating absolute and relative features of target sequences (see also Dalla Bella, Giguere, & Peretz, 2007; Pfordresher & Brown, 2007). Specifically, participants imitated the absolute pitch within songs better than the absolute pitch in sentences, but imitated the relative pitch-time contours of speech and song equally well.

In Mantell and Pfordresher’s (2013) study, participants imitated recordings of vocalizations and also synthesized versions of these recordings, making it possible to examine whether they adjusted the resonant properties of their vocal tract in order to imitate the timbre of targets. The synthesized recordings featured a timbre that resembled a human voice, but that differed considerably from the timbre of vocal recordings. Analyses of the long-term average spectra during imitations (Figure 6) suggested that participants adjusted their own vocal resonances in order to imitate the timbre of each target, even though this was not necessary according to instructions, which simply focused on the imitation of pitch content. As illustrated in Figure 4, participants also naturally matched the temporal structure of heard sequences, which was also not specifically requested in the instructions. Thus, when humans voluntarily imitate speech or song sequences, they spontaneously imitate multiple acoustic features of the sequences. Interestingly, when an orangutan imitated whistle sequences produced by a human (Wich et al., 2009), it also spontaneously matched the duration and temporal spacing of target sequences (Figure 3a), suggesting that this propensity is not limited to human imitators.

Figure 6. Long-term average spectra showing that adult humans spontaneously match the timbre of target sound sequences when targets are either natural or synthetic.

What Makes a Sound Imitatible?

As noted above, a basic question surrounding the imitatibility of sounds concerns whether, or to what degree, organisms have evolved dedicated systems that are specialized for imitating certain sound features. The imitatibility of sounds is not simply based on whether the acoustic properties of individual sounds resemble those of natural vocalizations. People are able to vocally reproduce melodies presented on a piano as well as those that are sung, and infant chimpanzees sometimes imitate environmental sounds (Figure 3b, Kojima, 2003). The complexity of a target sequence can strongly limit its imitatibility. At a cognitive level, different kinds of target sequences represent different auditory domains and may, according to some theories, be processed by different cognitive modules. Take for instance the difference between a sung melody on the syllable “la” versus a spoken sentence. Both are auditory sequences, but each is complex in its own way. Because the former sequence is heard as “musical,” it may be processed differently from the latter sequence. Such putative separation across domains may therefore influence imitatibility and, consequently, many human studies focus on the structural complexity of rhythmic, melodic, and phonic combinations rather than on the relative difficulty of producing individual sounds.

An important ancillary consideration when evaluating the imitatibility of sounds is the flexibility of vocal production by the imitator. Obviously, an individual who can imitate a wide range of inputs must be able to engage in flexible vocal motor control. Flexibility in pitch range increases dramatically during childhood, and thus may play a large role in the development of pitch matching abilities in singing (Welch, 1979). Similarly, poor-pitch singers, who exhibit a general deficit of vocal imitation, also exhibit an apparent lack of flexibility in vocal imitation (Pfordresher & Brown, 2007). Poor-pitch singers also show a larger advantage for matching pitch from recordings of their own voice, in contrast to matching the vocal pitch of other singers, than do more accurate singers (R. Moore et al., 2008; Pfordresher & Mantell, 2014). Finally, when transferring from the imitation of one sequence to another, poor-pitch singers show a greater tendency to perseverate the previously imitated pitch pattern than do more accurate singers (Wisniewski, Mantell, & Pfordresher, 2013). Interestingly, this apparent lack of flexibility in poor-pitch singers does not appear to be based on vocal motor control in that poor-pitch singers exhibit similar pitch range and ability to control a sustained pitch as accurate singers (Pfordresher & Brown, 2007; Pfordresher & Mantell, 2009). Instead, their inflexibility seems to result from dysfunctional vocal imitation abilities.

Even when considering only the performance of adult humans, there is no fixed scale of most-to-least imitatible sounds or sound features. Nevertheless, it may be possible to generate a gross scale of different properties associated with sounds being more or less imitatible. For instance, sound features that are imperceptible or sounds (and sequences) with complex, aperiodic, novel acoustic structures are typically more difficult to imitate, whereas sounds that are routinely self-generated tend to be the easiest to reproduce. Interestingly, this scale is the inverse of the criteria that biologists have developed for identifying instances of vocal imitation. Specifically, production of highly imitatible sounds is generally considered to be the least compelling behavioral evidence of vocal imitation, whereas production of novel complex sounds (which are often less imitatible) is currently considered to be the most compelling evidence. Consequently, the sounds that an individual is most likely to be proficient at imitating are also the sounds that scientists are least likely to consider relevant to studies of vocal imitation. In fact, in the taxonomy of vocal learning abilities proposed by Janik and Slater (2000), some sounds are inherently impossible to imitate; by their definitional criteria, an individual cannot imitate any sound that is already within the individual’s vocal repertoire. This constraint arises from the fact that they view vocal imitation as a learning mechanism. If vocal imitation is viewed as vocal reenactment, however, then individuals can potentially imitate any sound. This includes their own vocalizations, a process referred to as self-imitation (Pfordresher & Mantell, 2014; Repp & Williams, 1987; Vallabha & Tuller, 2004).

Studies of intentional vocal imitation in humans are beginning to shed new light on how sound imitatibility varies within and across individuals. They have yet to reveal, however, why sound imitatibility varies. If a person is particularly good at imitating a family member’s voice that is similar to his or her own, is this because the person possesses an adaptively specialized module that is tuned to the specific features of sounds produced by relatives? Is it because shared genetics have led to similar vocal organs? Or, is it because the person aspires to be like that family member and has practiced copying particular mannerisms of their role model’s vocal style over many years? To a large extent, the imitatibility of a sound depends on what resources the listener brings to bear for perceiving, encoding, and producing sounds. A clearer understanding of the physical and mental mechanisms relevant to increasing the imitatibility of sounds can be gained by examining those individuals who have reached the highest levels of performance—professional imitators.

Expertise in Sound Imitation

If, as we claim, voluntary imitation of sounds is a cognitive skill, then it should be possible to improve imitation abilities with training. However, if sound imitation is more of an innate capacity, then individual variations in ability should be less dependent on experience. Earlier claims that vocal imitation involves feedback-based error correction (Heyes, 1996; N. E. Miller & Dollard, 1941) predict that the fidelity with which particular sounds are imitated should increase incrementally as the number of comparisons between produced vocalizations and remembered targets increases. However, studies of the vocal imitation of pitch in singing have not shown any improvements across repeated trials in which participants attempted either to match the same pitch vocally (Hutchins & Peretz, 2012), or to repeatedly imitate the same spoken or sung sequence (Wisniewski et al., 2013). Likewise, efforts to enhance pitch imitation accuracy by having participants sing along with the correct sequence (auditory augmented feedback) have yielded mixed results and may even degrade the performance of poor-pitch singers (Hutchins, Zarate, Zatorre, & Peretz, 2010; Pfordresher & Brown, 2007; Wang, Yan, & Ng, 2012; Wise & Sloboda, 2008). It is clear anecdotally that individuals can improve their vocal imitation abilities through instruction and practice. However, simply relying on error correction based on auditory feedback may not suffice. More successful methods of augmented feedback involve showing the singer a graphical display of the imitated and target pitches as on-screen icons, with changes to sung pitch influencing the spatial proximity of these displays (Hoppe, Sadakate, & Desain, 2006).

Anecdotally, evidence that learning experiences can strongly determine sound imitation abilities comes from the performances of professional musicians, who often train and practice for decades to achieve the control necessary to produce particular sound qualities (e.g., features such as vibrato or breathiness). Often, musical training focuses on teaching students how to produce higher quality sound sequences. This generally means the student must learn to reproduce the features of sounds commonly produced by more proficient musicians. The fact that many professional musicians spend several hours a day performing exercises to maintain and enhance their musical skills attests to the important contributions of practice to their ability to flexibly and accurately reproduce sounds in a prescribed way.